---

title: "Computational Vexillology"

subtitle: "Decoding National Aesthetics Through Data Science"

author: "Alejandro Treny"

date: today

format:

html:

theme: flatly

code-fold: true

code-tools: true

toc: true

toc-location: left

toc-depth: 3

number-sections: true

smooth-scroll: true

page-layout: article

# CAMBIO CRUCIAL: De 'true' a 'false'

embed-resources: false

# OPCIONAL: Organiza las librerías en una carpeta limpia

lib-dir: libs

mainfont: "Palatino, Georgia, serif"

include-in-header:

- text: |

<style>

.content { max-width: 860px; margin-left: auto; margin-right: auto; }

#quarto-content > * { max-width: 860px; }

.cell-output-display { max-width: 900px; margin-left: auto; margin-right: auto; }

</style>

execute:

warning: false

message: false

---

## Introduction {.unnumbered}

What if we could treat national flags not as art, but as **high-dimensional data**? Every pixel encodes a decision: a color chosen, a symbol placed, a geometry defined. Collectively, the \~200 sovereign flags of the world form a rich visual corpus shaped by centuries of history, religion, revolution, and geography.

This project, **Computational Vexillology**, sets out to answer a provocative question:

> *Does the design of a country's flag predict its destiny?*

We will convert every flag into a mathematical fingerprint using two complementary lenses:

- **Computer Vision** (OpenCV, scikit-image): extracting explicit, interpretable metrics like color warmth, visual entropy, and structural geometry.

- **Deep Learning** (ResNet50): extracting latent style embeddings that capture abstract patterns a human might miss.

With these fingerprints in hand, we will:

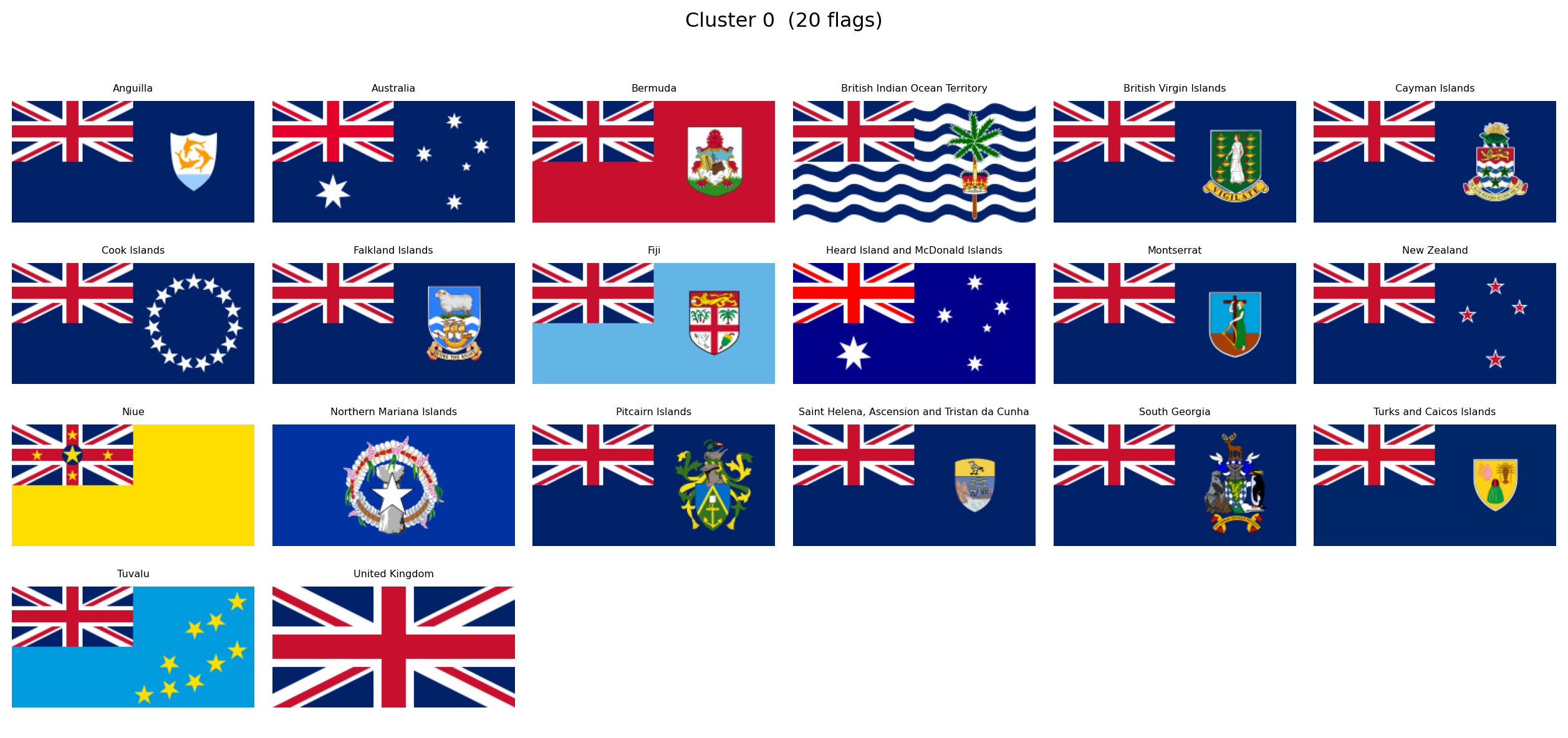

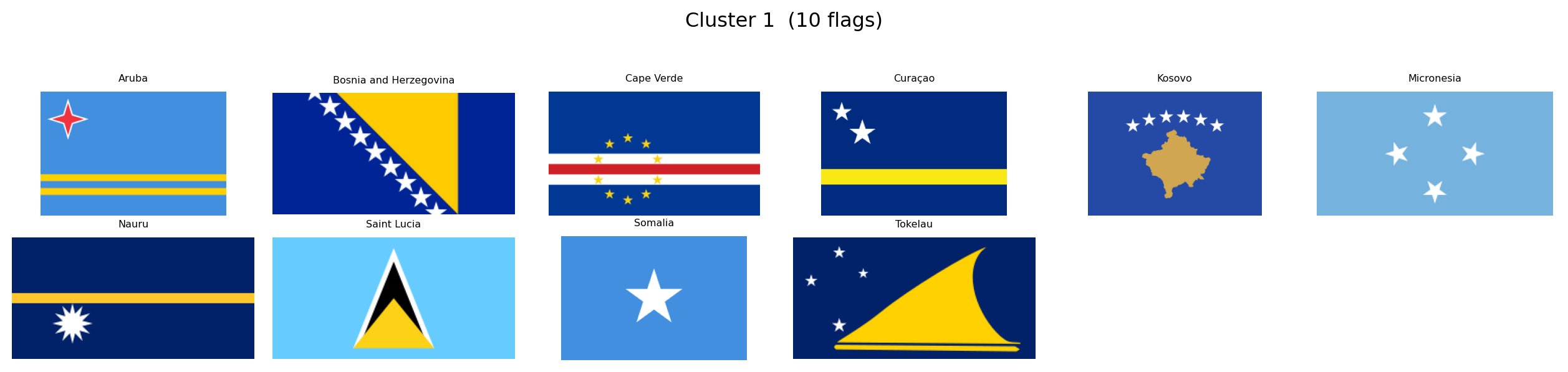

1. **Map the Design Universe**, using UMAP to project flags into a 2D space where visually similar flags cluster together.

2. **Rediscover History**, testing whether unsupervised clustering can "accidentally" recover colonial empires, religious blocs, and pan-regional movements.

3. **Test Scientific Hypotheses**, correlating flag aesthetics with geography, economics, and politics.

The entire analysis is contained in this document: reproducible code, interactive visualizations, and statistical findings in a single artifact.

### Research Questions

Several hypotheses will guide our exploration. Among them:

- **Solar Determinism**: do countries closer to the equator use "hotter" colors (reds, yellows) while northern nations prefer cooler palettes?

- **Complexity of Development**: does national wealth correlate with flag simplicity, mirroring the minimalist trend in modern corporate branding?

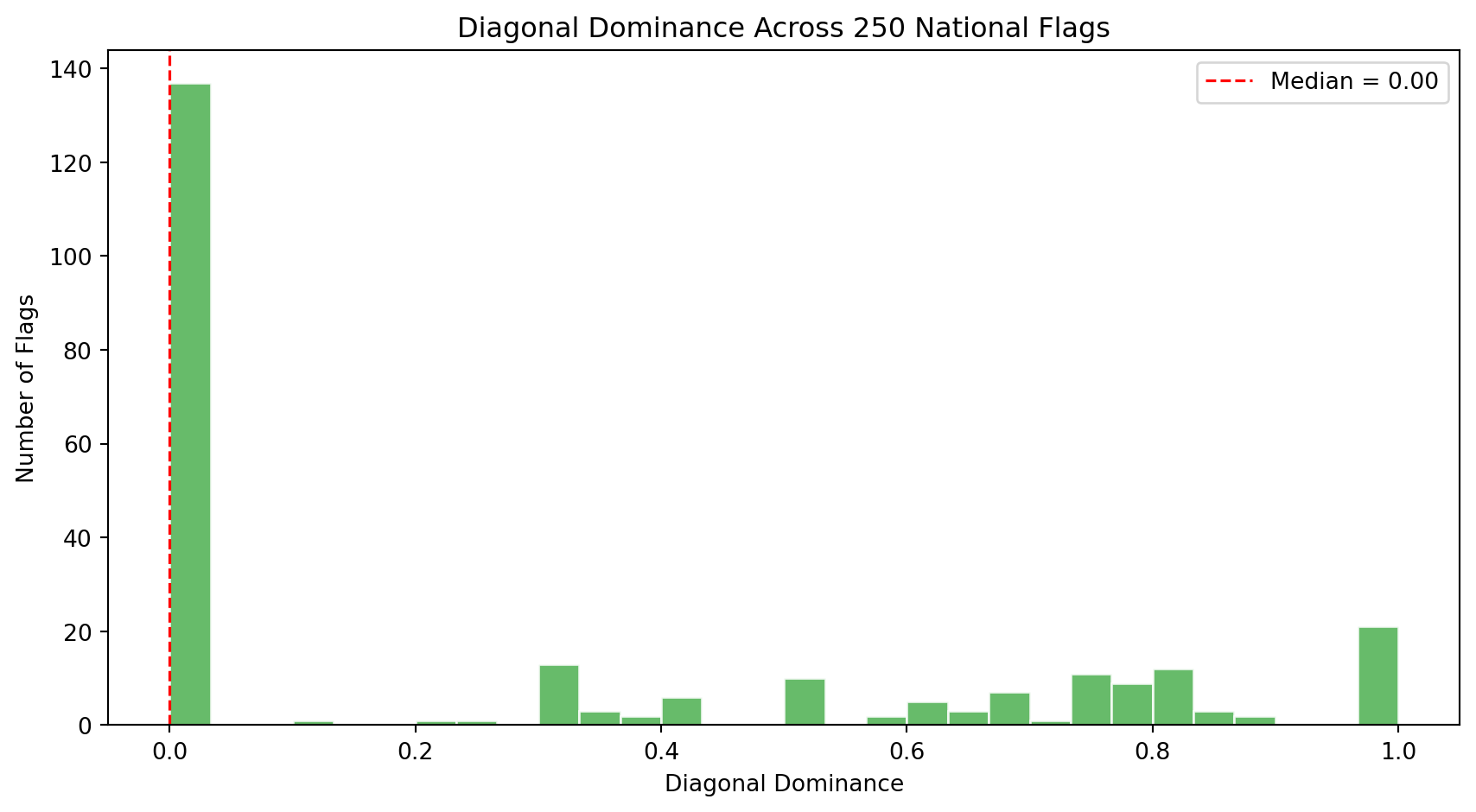

- **The Revolutionary Diagonal**: are diagonal lines and dynamic geometries more common in flags born from revolution or inequality?

- **The Colonial Ghost**: can an algorithm, grouping flags purely by visual similarity, rediscover the footprint of the British Empire or the Crescent bloc?

These are starting points, not boundaries. As the data reveals its structure, we will follow wherever it leads.

```{python}

#| label: setup

#| code-summary: "Import core libraries"

import numpy as np

import pandas as pd

import requests

import matplotlib.pyplot as plt

import plotly.express as px

import cairosvg

from PIL import Image

from pathlib import Path

from itables import show as itshow

import io

import warnings

warnings.filterwarnings("ignore")

print(f"NumPy: {np.__version__}")

print(f"Pandas: {pd.__version__}")

print(f"Pillow: {Image.__version__}")

print("CairoSVG: ✓")

```

With our environment ready, we begin by assembling the visual corpus.

## Building the Flag Corpus

The first phase of this project is purely visual. Before introducing any socio-economic or geographic data, we want to let the flags speak for themselves. What patterns emerge when we look at 250 national designs as raw geometry and color?

Our source is [FlagCDN](https://flagcdn.com), a public CDN that serves every national flag in **SVG format**, giving us precise vector definitions of colors and shapes rather than lossy rasterized pixels. We pair these with a minimal country index from the [REST Countries API](https://restcountries.com), just enough to label each flag with a name and ISO code.

### Country Index

We first build a lightweight index of all countries: just the ISO alpha-2 code, the common name, and independence status. This gives us the list of flags to download and a way to label them. All other metadata (coordinates, inequality, population) will be loaded later when we turn to hypothesis testing.

```{python}

#| label: fetch-index

#| code-summary: "Build country index from REST Countries API"

fields = "name,cca2,independent"

response = requests.get(f"https://restcountries.com/v3.1/all?fields={fields}")

countries_raw = response.json()

df_index = pd.DataFrame([

{

"code": c["cca2"].lower(),

"name": c["name"]["common"],

"independent": c["independent"],

}

for c in countries_raw

]).sort_values("name").reset_index(drop=True)

print(f"{'Total entries:':<25} {len(df_index)}")

print(f"{'Independent states:':<25} {df_index['independent'].sum()}")

print(f"{'Territories/other:':<25} {(~df_index['independent']).sum()}")

itshow(df_index, lengthMenu=[5, 10, 25, 50], pageLength=5)

```

The API returns 250 entries: 195 recognized independent states and 55 territories or dependencies. Every entry has a unique two-letter ISO code that will serve as our primary key throughout the analysis.

### Downloading Flags as SVG

We download flags in **SVG** (Scalable Vector Graphics) rather than PNG. SVGs encode colors as exact hex values and shapes as mathematical paths, which means our color analysis operates on precise definitions rather than compression artifacts. When pixel-level processing is needed later (for neural networks, for instance), we rasterize the SVGs at a controlled resolution using `cairosvg`.

```{python}

#| label: download-flags

#| code-summary: "Download SVG flags from FlagCDN"

flag_dir = Path("data/flags_svg")

flag_dir.mkdir(parents=True, exist_ok=True)

success, failed = 0, []

for code in df_index["code"]:

path = flag_dir / f"{code}.svg"

if path.exists():

success += 1

continue

try:

r = requests.get(f"https://flagcdn.com/{code}.svg", timeout=10)

if r.status_code == 200:

path.write_bytes(r.content)

success += 1

else:

failed.append(code)

except Exception:

failed.append(code)

print(f"SVG flags downloaded: {success} / {len(df_index)}")

if failed:

print(f"Failed: {failed}")

```

### A First Look

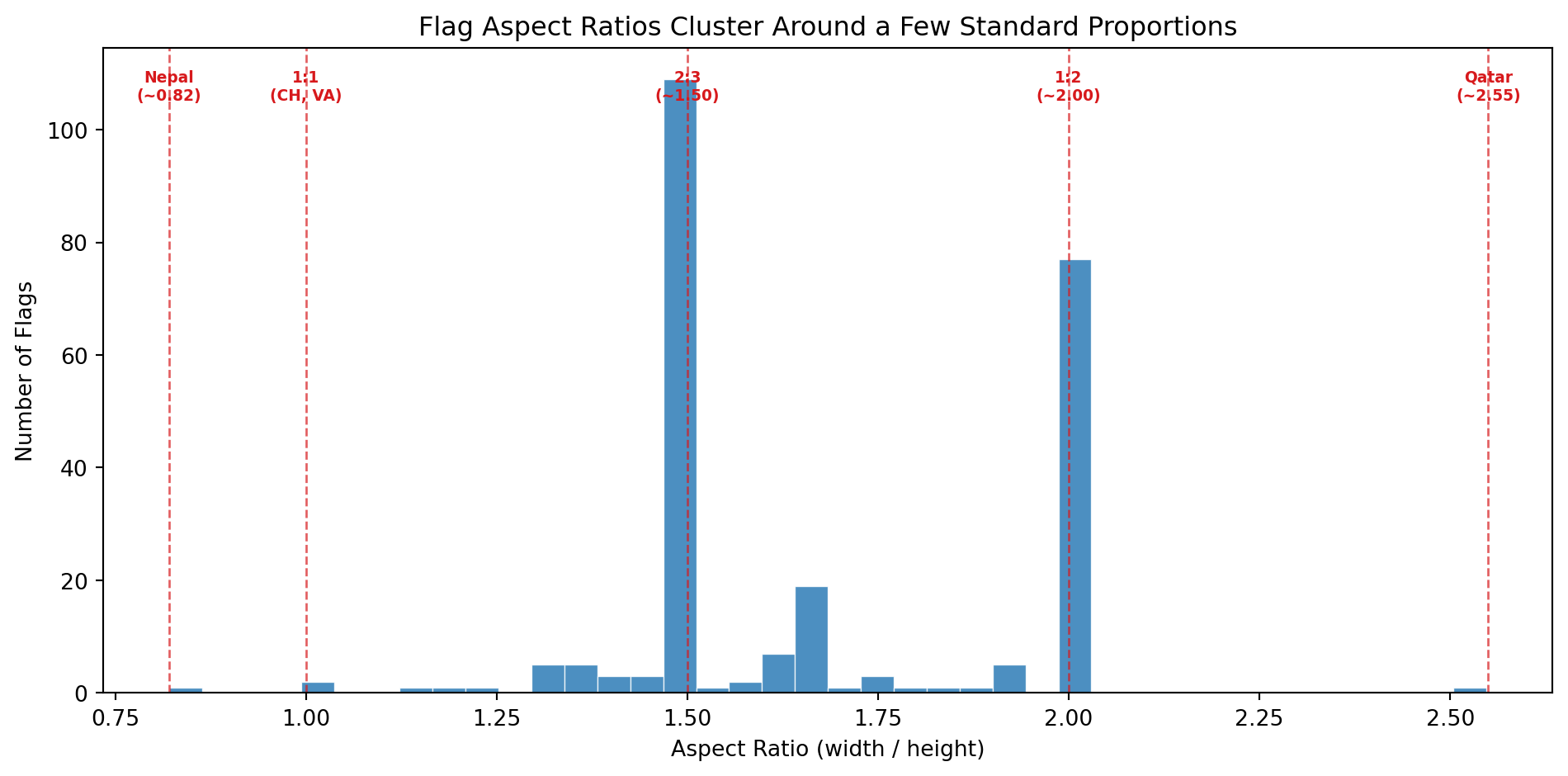

To confirm the pipeline works, let's rasterize a handful of flags and display them. This also illustrates the diversity of aspect ratios we are dealing with: most flags are 2:3 or 1:2 rectangles, but Nepal's double-pennant is an entirely different shape.

```{python}

#| label: flag-preview

#| code-summary: "Preview a sample of downloaded flags"

#| fig-cap: "A sample of six flags rasterized from SVG at 640px width. Note the variation in aspect ratios."

sample_codes = ["de", "es", "br", "np", "za", "gb"]

sample_names = {c: df_index.loc[df_index["code"] == c, "name"].values[0] for c in sample_codes}

fig, axes = plt.subplots(2, 3, figsize=(12, 5))

for ax, code in zip(axes.flat, sample_codes):

svg_path = flag_dir / f"{code}.svg"

png_data = cairosvg.svg2png(url=str(svg_path), output_width=640)

img = Image.open(io.BytesIO(png_data)).convert("RGB")

ax.set_facecolor("#f0f0f0")

ax.imshow(np.array(img), aspect="equal")

ax.set_title(f"{sample_names[code]} ({code.upper()})", fontsize=11)

ax.axis("off")

plt.tight_layout()

plt.show()

```

All 250 flags are now stored locally as SVGs. In the next section we begin **feature extraction**: converting each flag into a numerical fingerprint that captures its color palette, visual complexity, and geometric structure.

## Feature Extraction

A flag is an image. An image is a grid of pixels. To compare flags mathematically, we need to reduce each image to a fixed-length vector of numbers, a **fingerprint**, where each number captures one meaningful property of the design. The choice of *which* properties to measure is the most important decision in the entire project, because downstream analyses (distances, clusters, hypothesis tests) can only discover patterns that our features are capable of encoding.

We draw our feature set from three sources:

- **Vexillological design principles**, particularly the five rules published by the North American Vexillological Association (NAVA) in *Good Flag, Bad Flag*: keep it simple, use meaningful symbolism, use two or three basic colors, no lettering or seals, and be distinctive or be related.

- **Flag design taxonomy**, as catalogued by Wikipedia's *List of National Flags by Design* and *Flag Families*: the systematic classification of flags by structural elements (stripes, crosses, triangles, crescents, stars) and by historical lineage (Pan-African, Pan-Arab, Pan-Slavic, Nordic Cross, British Ensign, etc.).

- **Computer vision fundamentals**: standard image descriptors from information theory (Shannon entropy), edge detection (Canny), and line detection (Hough Transform) that quantify visual properties without any domain-specific assumptions.

The result is a set of **19 features** organized into five families. Below we describe each family, the metrics it contains, and the scientific or design rationale behind each one.

### Family 1: Color Palette (8 metrics)

Color is the most immediately visible property of any flag. The heraldic tradition defines a strict vocabulary of **tinctures**: metals (gold/argent, rendered as yellow and white) and colors (gules/red, azure/blue, vert/green, sable/black, purpure/purple). Nearly every national flag draws its palette from this classical set.

We measure color in the **HSV** (Hue, Saturation, Value) color space rather than RGB. HSV separates chromatic content (hue) from brightness (value) and intensity (saturation), which makes it much easier to define categories like "red" or "warm" in a way that matches human perception.

The eight color palette metrics are:

| Metric | Definition | Why it matters |

|--------|-----------|----------------|

| `warmth_score` | Fraction of chromatic pixels with warm hues (reds, oranges, yellows) | Directly tests the **Solar Determinism** hypothesis: do equatorial nations favor hotter colors? |

| `coolness_score` | Fraction of chromatic pixels with cool hues (blues, greens) | The complement of warmth. Nordic and maritime nations may cluster here. |

| `red_pct` | Fraction of total pixels that are red | Red is the single most common flag color worldwide, associated with blood, revolution, and courage. |

| `blue_pct` | Fraction of total pixels that are blue | Blue symbolizes sky, sea, freedom, and vigilance. Common in maritime and democratic traditions. |

| `green_pct` | Fraction of total pixels that are green | Green appears in Pan-African, Pan-Arab, and Islamic flag traditions. Also associated with land and agriculture. |

| `yellow_pct` | Fraction of total pixels that are yellow/gold | Gold represents wealth, sun, and generosity in heraldic terms. Dominant in African and South American flags. |

| `white_pct` | Fraction of total pixels that are white/silver | White symbolizes peace, purity, and snow. Also serves as a background or fimbriation (border) color. |

| `black_pct` | Fraction of total pixels that are black | Black represents determination, heritage, and mourning. Prominent in Pan-African and revolutionary flags. |

### Family 2: Color Complexity (3 metrics)

NAVA's third principle states: *"Use two or three basic colors from the standard color set."* This is a measurable claim. A flag with two dominant colors is simpler and more recognizable than one with seven. Beyond counting colors, we also want to know how much those colors *contrast* with each other (high contrast aids recognition at a distance, which is the entire functional purpose of a flag), and whether the palette leans toward the aggressive end of the spectrum.

| Metric | Definition | Why it matters |

|--------|-----------|----------------|

| `palette_complexity` | Number of significant color clusters found by K-Means quantization of the flag's pixel data. This is not the number of colors a human would name (Afghanistan = 4 to the eye, but 7 at the pixel level due to its detailed emblem). It measures chromatic variety including gradients, shading, and fine artwork. | Operationalizes NAVA's "2-3 colors" rule at the pixel level. Flags with detailed coats of arms, seals, or multi-shade emblems will score higher than clean geometric designs. |

| `color_contrast` | Maximum perceptual color distance (CIEDE2000) between any two dominant color clusters. Values typically range from 0 to 100, though highly chromatic pairs can slightly exceed 100. | High contrast (e.g. black on white, red on green) makes a flag readable from far away. Low contrast suggests a monochromatic or analogous palette. |

| `aggression_index` | Combined area fraction of red and black pixels | Tests the **Revolutionary Diagonal** hypothesis: are flags born from violent independence movements more red-and-black? Also correlates with the Pan-African color tradition. |

### Family 3: Visual Complexity (3 metrics)

How "busy" is a flag? A tricolor with three solid blocks of color is among the simplest possible designs. A flag with a detailed coat of arms, animals, text, and ornamental borders is visually complex. NAVA's first principle (*"Keep it simple: the flag should be so simple that a child can draw it from memory"*) and fourth principle (*"No lettering or seals"*) both relate to complexity.

We measure complexity from three complementary angles:

| Metric | Definition | Why it matters |

|--------|-----------|----------------|

| `visual_entropy` | Shannon entropy of the grayscale intensity histogram, in bits | An information-theoretic measure of pixel diversity. Simple flags (few gray levels) have low entropy; intricate designs (many gray levels from gradients, shadows, and detail) have high entropy. |

| `edge_density` | Fraction of pixels detected as edges by the Canny algorithm | A geometric measure of complexity. More edges mean more shapes, boundaries, and fine detail in the design. A solid tricolor has very few edges; a flag with a detailed eagle emblem has many. |

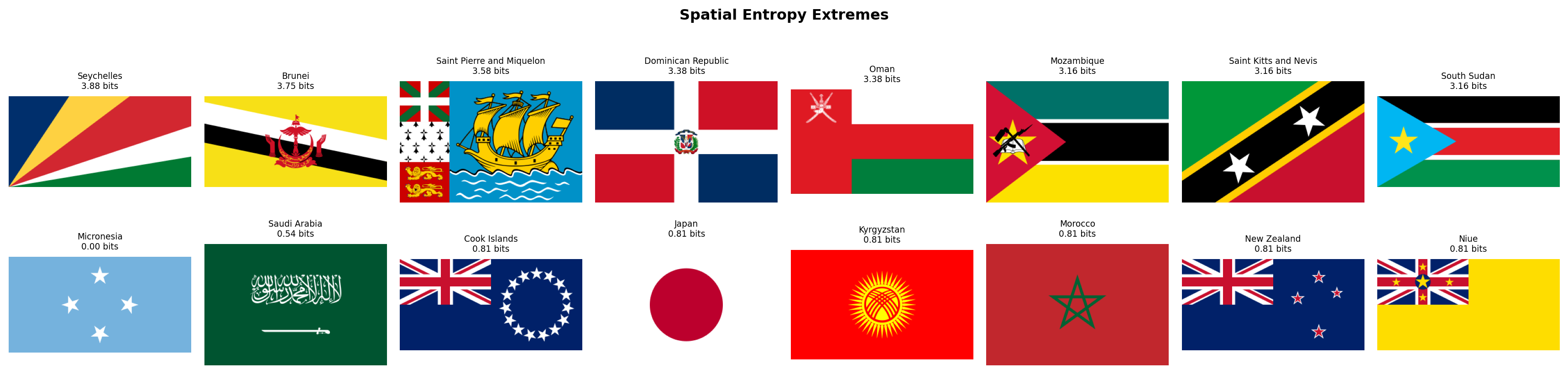

| `spatial_entropy` | Entropy of color distribution across a spatial grid (the flag divided into a 4x4 grid of cells) | Distinguishes between *distributed* complexity (patterns spread across the entire flag, like the USA's stars-and-stripes) and *localized* complexity (a single emblem on a plain background, like Japan's circle on white). Two flags can have identical `visual_entropy` but very different `spatial_entropy`. |

### Family 4: Geometric Structure (4 metrics)

Flag designs fall into well-known structural families: horizontal stripes (tribands, tricolors), vertical stripes, diagonal divisions, crosses, and more. These structural patterns carry historical meaning. Horizontal tricolors descend from the Dutch and French revolutionary traditions. Nordic crosses mark Scandinavian identity. Diagonal stripes are rarer and more dynamic, often signaling a break from colonial templates.

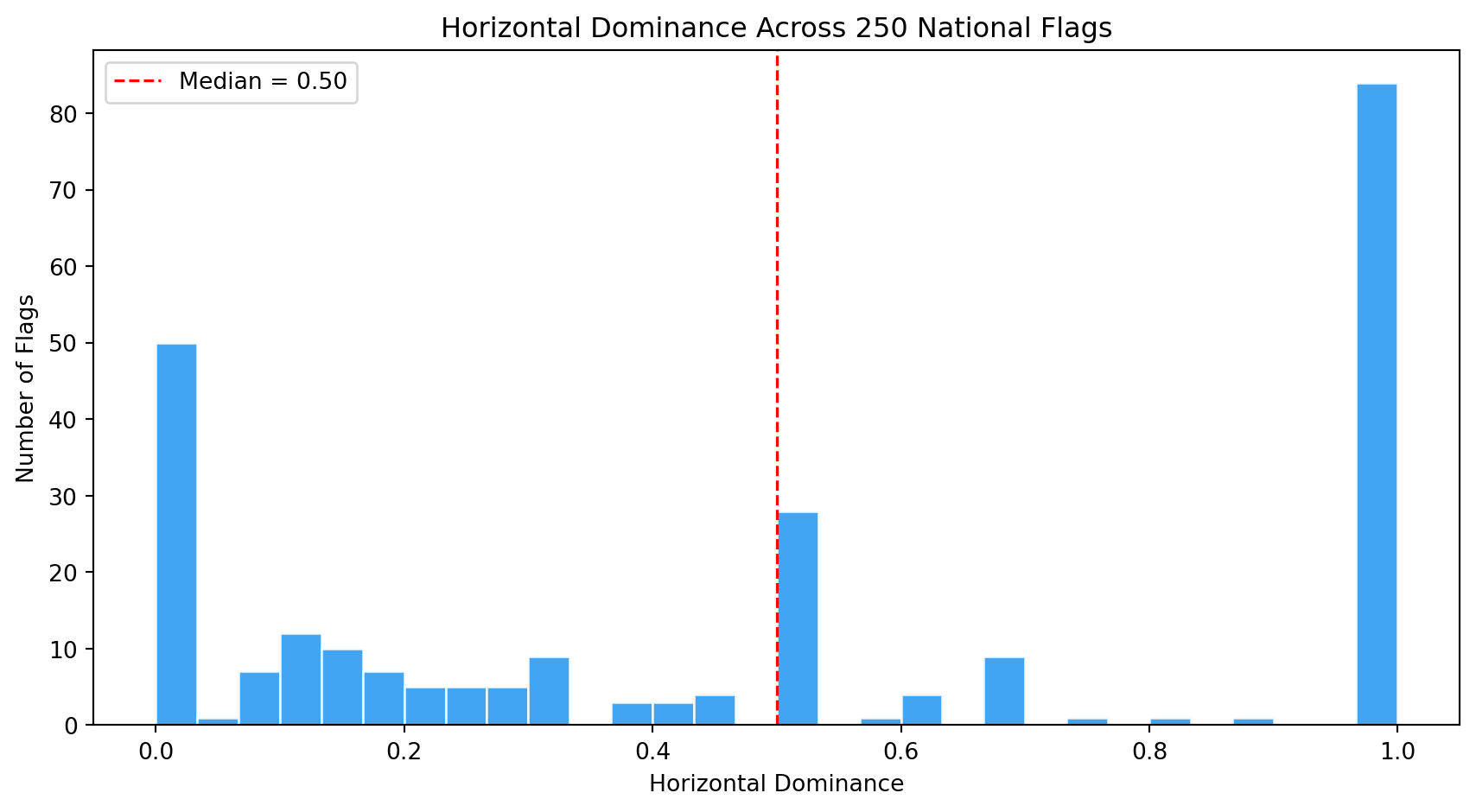

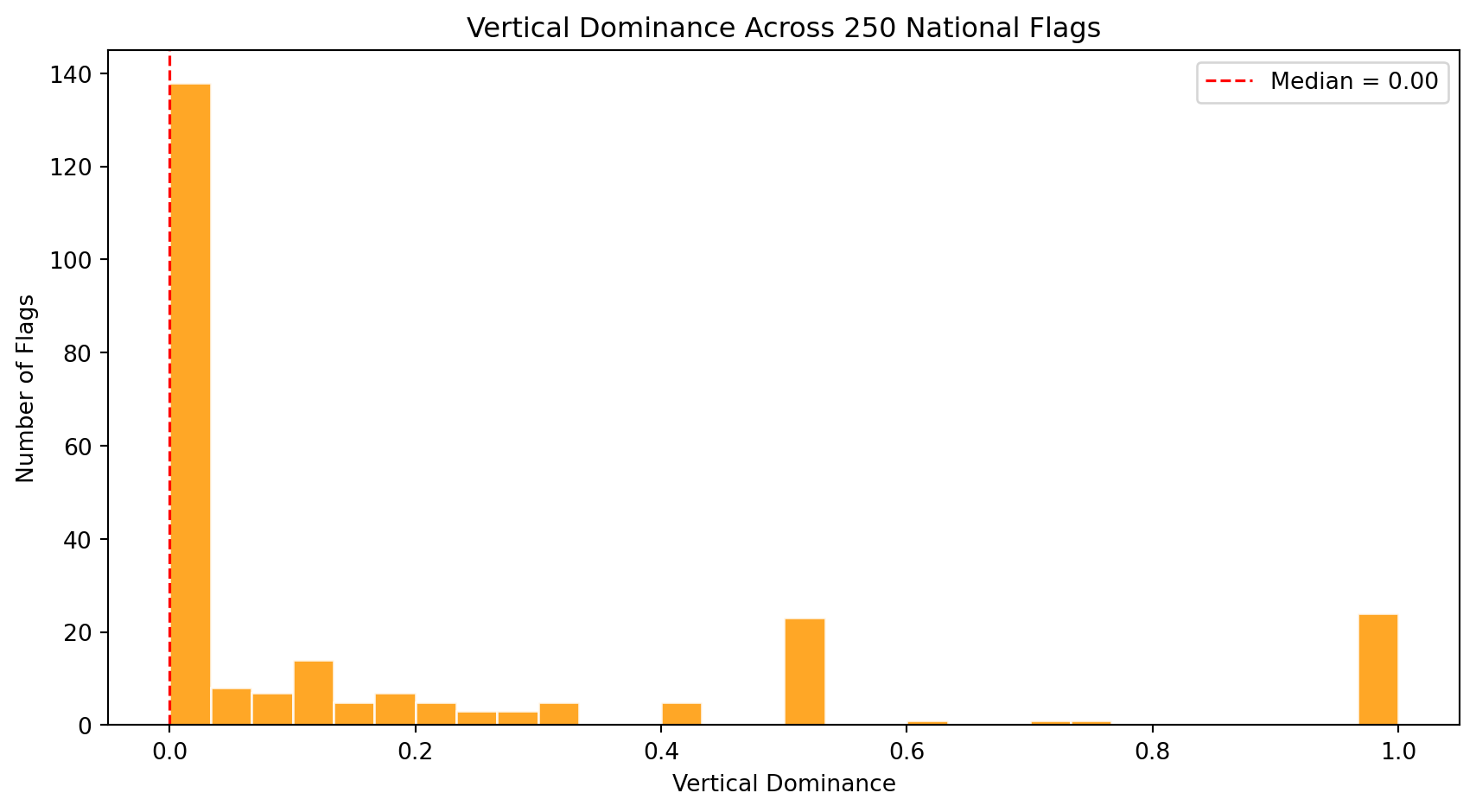

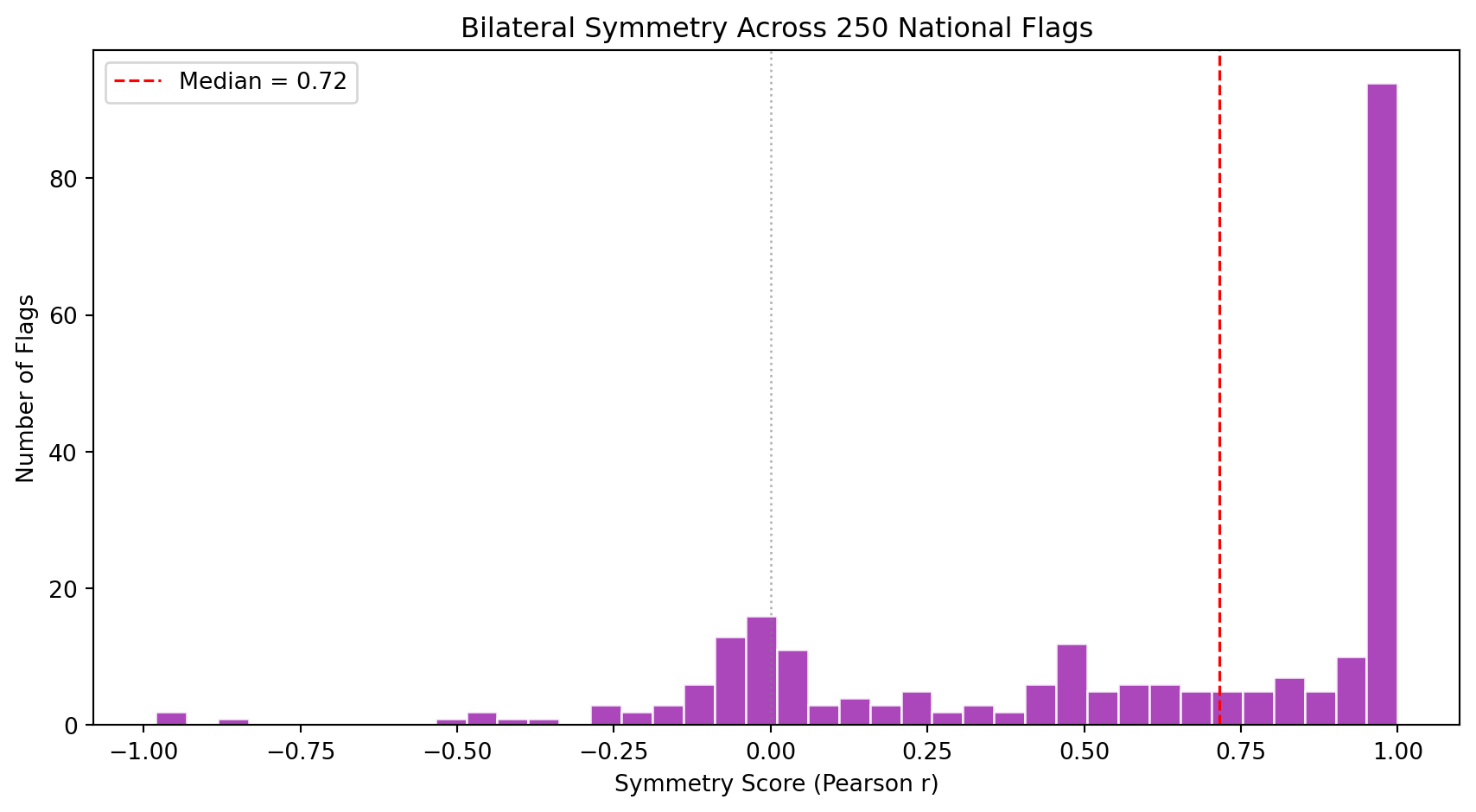

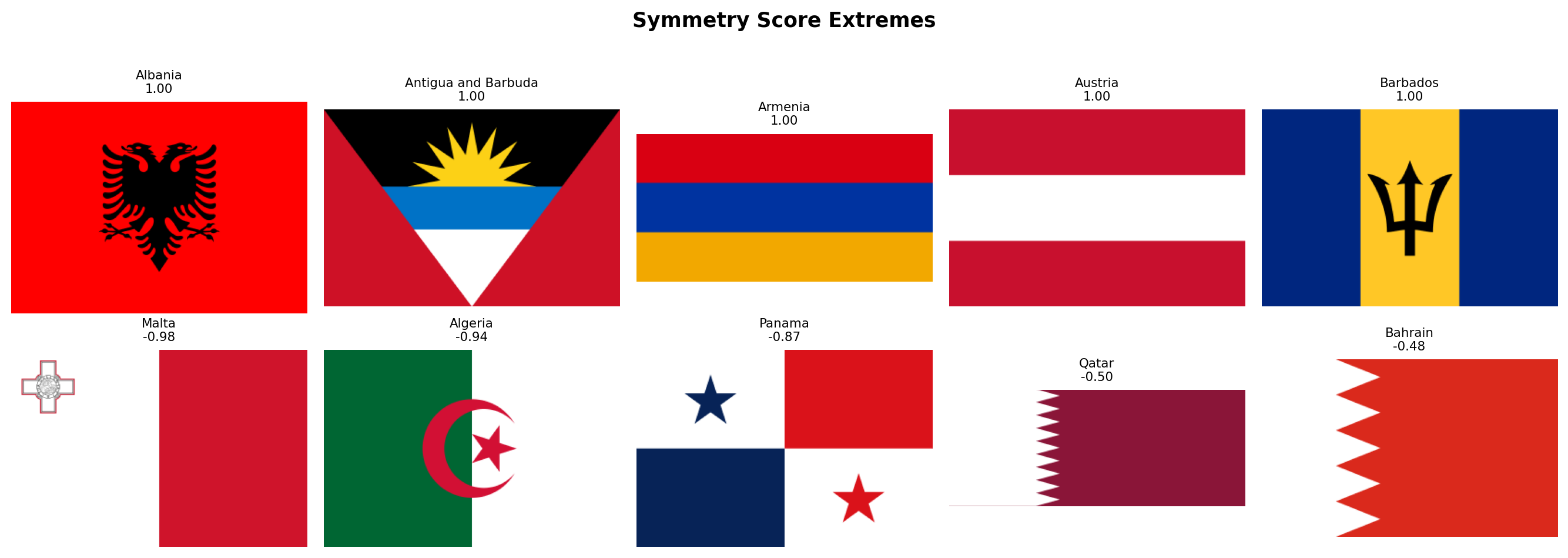

We detect dominant line angles in each flag using the **Hough Transform**, a classical computer vision algorithm that finds straight lines in an image. By classifying detected lines by their angle, we can quantify whether a flag's geometry is primarily horizontal, vertical, or diagonal. We also measure bilateral symmetry, since most flags are designed to look the same when reflected horizontally.

| Metric | Definition | Why it matters |

|--------|-----------|----------------|

| `horizontal_dominance` | Fraction of strong Hough lines that are near-horizontal (within 10 degrees of the horizon) | Captures membership in the triband/tricolor family, the single largest design family in the world. |

| `vertical_dominance` | Fraction of strong Hough lines that are near-vertical (within 10 degrees of the vertical axis) | Distinguishes vertical tricolors (French tradition: France, Italy, Belgium, Ireland) from horizontal tribands. |

| `diagonal_dominance` | Fraction of strong Hough lines that are neither horizontal nor vertical (the middle angular zone between 10 and 80 degrees) | Rare and visually dynamic. Tests the **Revolutionary Diagonal** hypothesis: flags like Tanzania, Namibia, and the DRC use diagonals. |

| `symmetry_score` | Pixel-wise correlation between the flag and its horizontal mirror image | Most flags are designed to be read from both sides. Asymmetric flags (Nepal, Bhutan, flags with off-center emblems like Portugal or Sri Lanka) are structural outliers. |

### Family 5: Aspect Ratio (1 metric)

The shape of a flag is one of its most fundamental design decisions, yet it is often overlooked in computational analyses that resize all flags to a fixed square.

| Metric | Definition | Why it matters |

|--------|-----------|----------------|

| `aspect_ratio` | Width divided by height of the rasterized flag | Most flags have a 2:3 ratio (~1.50) or 1:2 (~2.00). Switzerland and Vatican City are square (1.00). Qatar is extremely elongated (~2.55). Nepal is the only flag taller than wide (~0.82). This single number separates entire design traditions. |

### Summary

Altogether, these **19 features** span the space of what makes a flag visually distinctive. They are organized so that each family answers a different question:

| Family | Question | N |

|--------|----------|---|

| Color Palette | *What colors does this flag use?* | 8 |

| Color Complexity | *How chromatically complex is the palette, and how do its colors contrast?* | 3 |

| Visual Complexity | *How busy is the design?* | 3 |

| Geometric Structure | *What shapes and symmetries define its layout?* | 4 |

| Aspect Ratio | *What is the flag's shape?* | 1 |

| **Total** | | **19** |

In the following subsections we implement each family as a Python function, extract all metrics for every flag, and visualize the results one family at a time.

### Rasterization Helper

Before extracting any features, we need a utility function to convert SVG flags into pixel arrays. SVGs are vector graphics (mathematical descriptions of shapes), but our computer vision algorithms operate on **rasters** (grids of pixels). The function below uses `cairosvg` to render each SVG at a fixed width and returns a NumPy array in RGB format.

We choose a default width of 320 pixels. This is large enough to preserve fine detail (small stars, thin stripes) but small enough to keep computation fast across 250 flags. The height is determined automatically by the SVG's native aspect ratio, which means Nepal's flag will be taller than wide and Qatar's will be very elongated. This is intentional: we want to preserve the true geometry of each flag rather than distorting it into a fixed square.

```{python}

#| label: rasterize-helper

#| code-summary: "SVG to pixel array conversion"

import cv2

from scipy.stats import entropy as shannon_entropy

from skimage.feature import canny

from skimage.transform import hough_line, hough_line_peaks

def rasterize_flag(svg_path, width=320):

"""Convert an SVG flag file into a NumPy RGB array.

Parameters

----------

svg_path : str or Path

Path to the .svg file.

width : int

Target width in pixels. Height is computed from the SVG's

native aspect ratio, so the flag is never distorted.

Returns

-------

np.ndarray

RGB image as a uint8 array of shape (H, W, 3).

"""

png_data = cairosvg.svg2png(url=str(svg_path), output_width=width)

img = Image.open(io.BytesIO(png_data)).convert("RGB")

return np.array(img)

```

## Color Palette

We now implement the first metric family. The function below takes an RGB image and returns eight numbers describing its color composition.

**How it works, step by step:**

1. **Convert RGB to HSV.** The HSV color space separates hue (the "name" of the color, like red or blue), saturation (how vivid the color is), and value (how bright it is). This separation lets us define color categories using simple numeric ranges on the hue channel, which would be very awkward in RGB.

2. **Build a chromatic mask.** Not every pixel carries meaningful color information. Very dark pixels (low value) look black regardless of their hue, and very pale pixels (low saturation, high value) look white. We exclude these achromatic pixels when computing warmth and coolness scores, so that a flag with a large white area does not dilute its chromatic profile.

3. **Classify hues.** OpenCV encodes hue on a 0-179 scale (not 0-360). Red wraps around: hues near 0 *and* near 179 are both red. We define each color category as a range of hue values combined with minimum saturation and value thresholds to avoid false positives (a very dark, desaturated pixel with hue=120 is not really "green").

4. **Compute area fractions.** Each metric is simply the count of pixels matching a category divided by the total number of pixels (for individual colors) or by the number of chromatic pixels (for warmth/coolness).

```{python}

#| label: color-palette-fn

#| code-summary: "Color palette extraction function"

def compute_color_palette(img_rgb):

"""Extract 8 color palette metrics from an RGB flag image.

All hue ranges are defined for OpenCV's 0-179 hue scale.

Saturation and value thresholds prevent false classifications

in near-black or near-white regions.

Returns a dict with keys:

warmth_score, coolness_score,

red_pct, blue_pct, green_pct, yellow_pct, white_pct, black_pct

"""

# Step 1: convert to HSV color space

img_hsv = cv2.cvtColor(img_rgb, cv2.COLOR_RGB2HSV)

h = img_hsv[:, :, 0] # hue: 0-179

s = img_hsv[:, :, 1] # saturation: 0-255

v = img_hsv[:, :, 2] # value: 0-255

total_pixels = img_rgb.shape[0] * img_rgb.shape[1]

# Step 2: chromatic mask (exclude near-black and near-white)

# A pixel is "chromatic" if it has meaningful saturation and is not

# too dark. Near-white pixels (high V, low S) are excluded by the

# saturation threshold alone; we do NOT cap V because pure saturated

# colors like RGB(255,0,0) have V=255 and must be counted.

chromatic = (s > 25) & (v > 40)

n_chromatic = max(chromatic.sum(), 1) # avoid division by zero

# Step 3: classify hues into warm and cool families

# Warm: reds (wrapping around 0/179), oranges, yellows (H < 30 or H > 160)

# Cool: greens and blues (H between 35 and 140). We start at 35 rather

# than higher to capture dark greens (like Pakistan's or Norfolk Island's)

# whose hue sits around H=70-75 in OpenCV's scale.

warm_mask = ((h <= 30) | (h >= 160)) & chromatic

cool_mask = ((h >= 35) & (h <= 140)) & chromatic

# Step 4: individual color masks with tighter thresholds

# Red wraps around: H <= 10 OR H >= 170, plus strong saturation and brightness

red_mask = ((h <= 10) | (h >= 170)) & (s > 80) & (v > 50)

# Orange occupies a narrow hue band between red and yellow

# (included in warmth but not tracked separately)

# Yellow: H 20-35, must be bright and saturated to distinguish from brown

yellow_mask = ((h >= 20) & (h <= 35)) & (s > 60) & (v > 100)

# Green: H 35-85, moderate saturation minimum

green_mask = ((h >= 35) & (h <= 85)) & (s > 40) & (v > 40)

# Blue: H 85-135, moderate saturation minimum

blue_mask = ((h >= 85) & (h <= 135)) & (s > 40) & (v > 40)

# Black: very low brightness regardless of hue

black_mask = (v < 40)

# White: very low saturation AND very high brightness

white_mask = (s <= 20) & (v >= 230)

return {

"warmth_score": round(warm_mask.sum() / n_chromatic, 4),

"coolness_score": round(cool_mask.sum() / n_chromatic, 4),

"red_pct": round(red_mask.sum() / total_pixels, 4),

"blue_pct": round(blue_mask.sum() / total_pixels, 4),

"green_pct": round(green_mask.sum() / total_pixels, 4),

"yellow_pct": round(yellow_mask.sum() / total_pixels, 4),

"white_pct": round(white_mask.sum() / total_pixels, 4),

"black_pct": round(black_mask.sum() / total_pixels, 4),

}

```

### Extraction

We iterate over every flag in our corpus, rasterize it, and compute the eight color palette metrics. The result is a DataFrame where each row is a flag and each column is a metric.

```{python}

#| label: extract-color-palette

#| code-summary: "Run color palette extraction on all flags"

records = []

for _, row in df_index.iterrows():

svg_path = flag_dir / f"{row['code']}.svg"

if not svg_path.exists():

continue

img = rasterize_flag(svg_path)

metrics = {"code": row["code"], "name": row["name"]}

metrics.update(compute_color_palette(img))

records.append(metrics)

df_palette = pd.DataFrame(records)

print(f"Color palette extracted: {df_palette.shape[0]} flags x {df_palette.shape[1]} columns")

itshow(df_palette, lengthMenu=[5, 10, 25, 50], pageLength=5)

```

### Color Landscape Overview

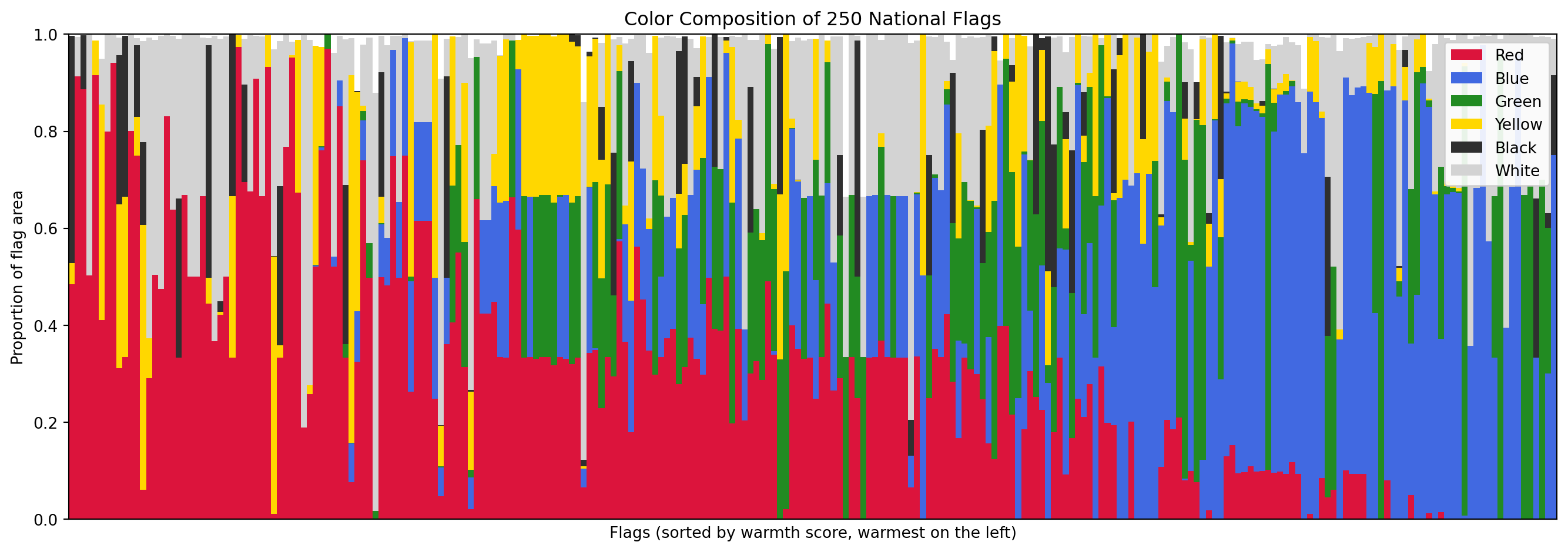

Before looking at individual metrics, let's get an overview of the entire color landscape. The stacked bar chart below shows the six major color proportions for every flag, sorted from warmest to coolest. Each vertical sliver is one flag; the height of each color band shows how much of the flag's area that color occupies.

```{python}

#| label: color-palette-stacked

#| code-summary: "Stacked bar chart of color composition across all flags"

#| fig-cap: "Color composition of all 250 flags, sorted by warmth score. Each vertical bar is one flag. The six bands show the proportion of the flag's area occupied by each major color."

color_cols = ["red_pct", "blue_pct", "green_pct", "yellow_pct", "black_pct", "white_pct"]

palette_colors = {

"red_pct": "#DC143C", "blue_pct": "#4169E1", "green_pct": "#228B22",

"yellow_pct": "#FFD700", "black_pct": "#2F2F2F", "white_pct": "#D3D3D3"

}

df_sorted = df_palette.sort_values("warmth_score", ascending=False).reset_index(drop=True)

fig, ax = plt.subplots(figsize=(14, 5))

bottom = np.zeros(len(df_sorted))

for col in color_cols:

ax.bar(range(len(df_sorted)), df_sorted[col], bottom=bottom,

color=palette_colors[col], label=col.replace("_pct", "").title(), width=1.0)

bottom += df_sorted[col].values

ax.set_xlabel("Flags (sorted by warmth score, warmest on the left)")

ax.set_ylabel("Proportion of flag area")

ax.set_title("Color Composition of 250 National Flags")

ax.legend(loc="upper right", framealpha=0.9)

ax.set_xlim(-0.5, len(df_sorted) - 0.5)

ax.set_ylim(0, 1)

ax.set_xticks([])

plt.tight_layout()

plt.show()

```

### Summary Statistics

The table below shows the distribution of each color palette metric across all 250 flags. Pay attention to the means and the spread (std): they tell us which colors dominate the world's flags on average and how much variation exists.

```{python}

#| label: color-palette-stats

#| code-summary: "Descriptive statistics for all 8 color palette metrics"

palette_metrics = ["warmth_score", "coolness_score", "red_pct", "blue_pct",

"green_pct", "yellow_pct", "white_pct", "black_pct"]

df_palette[palette_metrics].describe().round(4)

```

### Warmth vs Coolness

The warmth and coolness scores partition the chromatic content of each flag into two opposing camps. Since a pixel can be warm, cool, or neither (e.g., purple, which sits between red and blue), these two scores do not necessarily sum to 1. The scatter plot below shows each flag as a point in warmth-coolness space, with its dominant color indicated by marker color.

```{python}

#| label: warmth-vs-coolness

#| code-summary: "Interactive scatter plot of warmth vs coolness for every flag"

#| fig-cap: "Each dot is a flag. Flags in the top-left are dominated by warm colors (reds, oranges, yellows). Flags in the bottom-right are cool (blues, greens). Flags near the origin have mostly achromatic palettes (white, black)."

# Determine the dominant color for each flag (for marker coloring)

dom_cols = ["red_pct", "blue_pct", "green_pct", "yellow_pct", "white_pct", "black_pct"]

dom_labels = {

"red_pct": "Red", "blue_pct": "Blue", "green_pct": "Green",

"yellow_pct": "Yellow", "white_pct": "White", "black_pct": "Black"

}

dom_color_scale = {

"Red": "#DC143C", "Blue": "#4169E1", "Green": "#228B22",

"Yellow": "#FFD700", "White": "#999999", "Black": "#2F2F2F"

}

df_warmth_plot = df_palette.copy()

df_warmth_plot["dominant_color"] = df_palette[dom_cols].idxmax(axis=1).map(dom_labels)

fig = px.scatter(

df_warmth_plot, x="warmth_score", y="coolness_score",

color="dominant_color",

color_discrete_map=dom_color_scale,

hover_name="name",

hover_data={"warmth_score": ":.2f", "coolness_score": ":.2f", "dominant_color": True},

labels={"warmth_score": "Warmth Score", "coolness_score": "Coolness Score",

"dominant_color": "Dominant Color"},

title="Flag Color Temperature: Warmth vs Coolness",

opacity=0.75, width=800, height=600,

)

# Diagonal reference line (warm = cool)

fig.add_shape(type="line", x0=0, y0=1, x1=1, y1=0,

line=dict(color="#ccc", width=1, dash="dash"))

fig.update_layout(xaxis_range=[-0.05, 1.05], yaxis_range=[-0.05, 1.05])

fig.show()

```

### Which Colors Dominate?

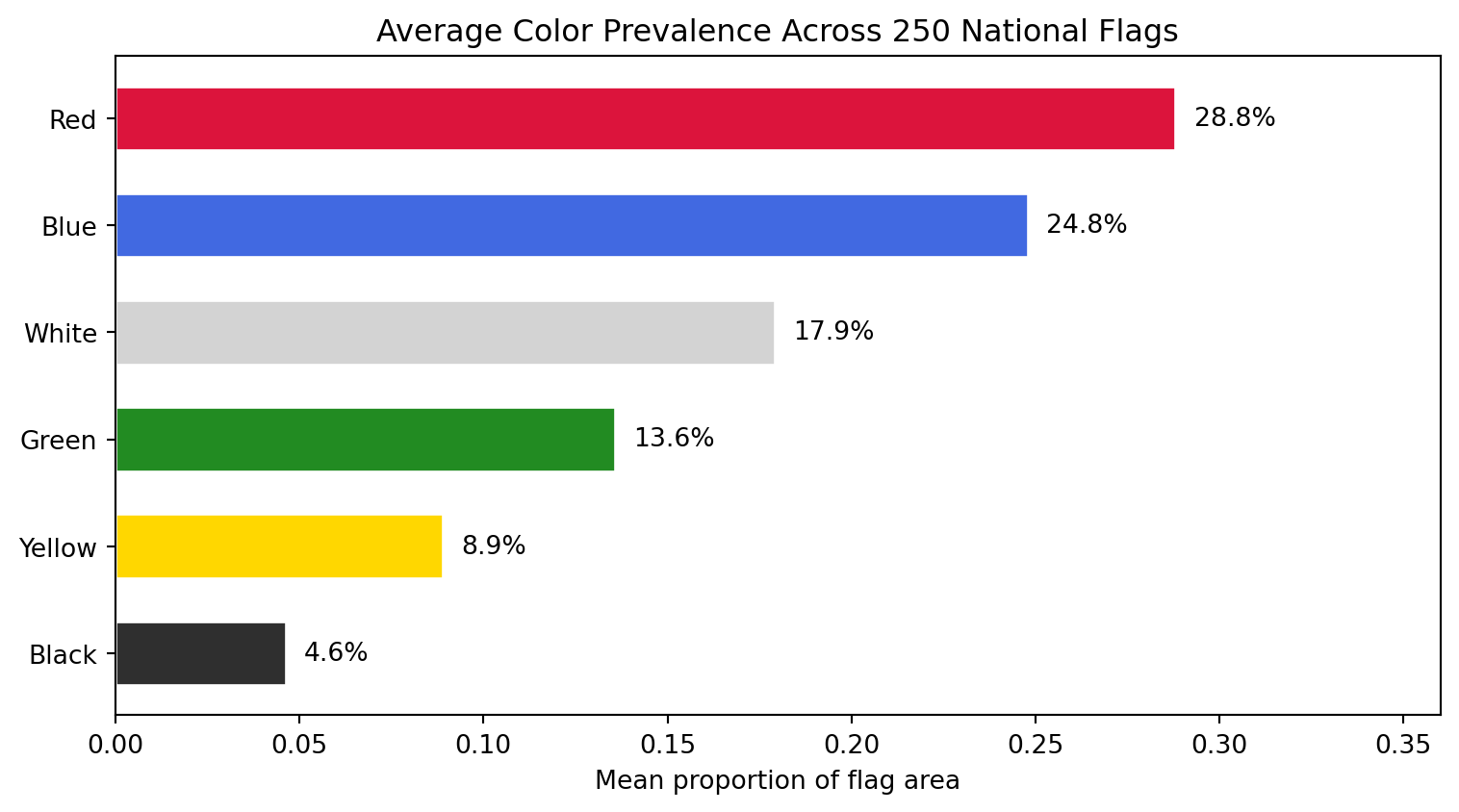

The bar chart below aggregates: for each of the six major colors, what is the *average* proportion across all 250 flags? This tells us the "global average flag" color recipe.

```{python}

#| label: color-prevalence

#| code-summary: "Average color prevalence across all 250 flags"

#| fig-cap: "Mean proportion of each color across all 250 flags. Red and white dominate, followed by blue. Yellow and green are less common, and black is the rarest major color."

mean_colors = df_palette[color_cols].mean().sort_values(ascending=True)

color_labels = [c.replace("_pct", "").title() for c in mean_colors.index]

bar_colors = [palette_colors[c] for c in mean_colors.index]

fig, ax = plt.subplots(figsize=(8, 4.5))

bars = ax.barh(color_labels, mean_colors.values, color=bar_colors, edgecolor="white", height=0.6)

# Add percentage labels on each bar

for bar, val in zip(bars, mean_colors.values):

ax.text(bar.get_width() + 0.005, bar.get_y() + bar.get_height() / 2,

f"{val:.1%}", va="center", fontsize=10)

ax.set_xlabel("Mean proportion of flag area")

ax.set_title("Average Color Prevalence Across 250 National Flags")

ax.set_xlim(0, mean_colors.max() * 1.25)

plt.tight_layout()

plt.show()

```

### The Warmest and Coolest Flags

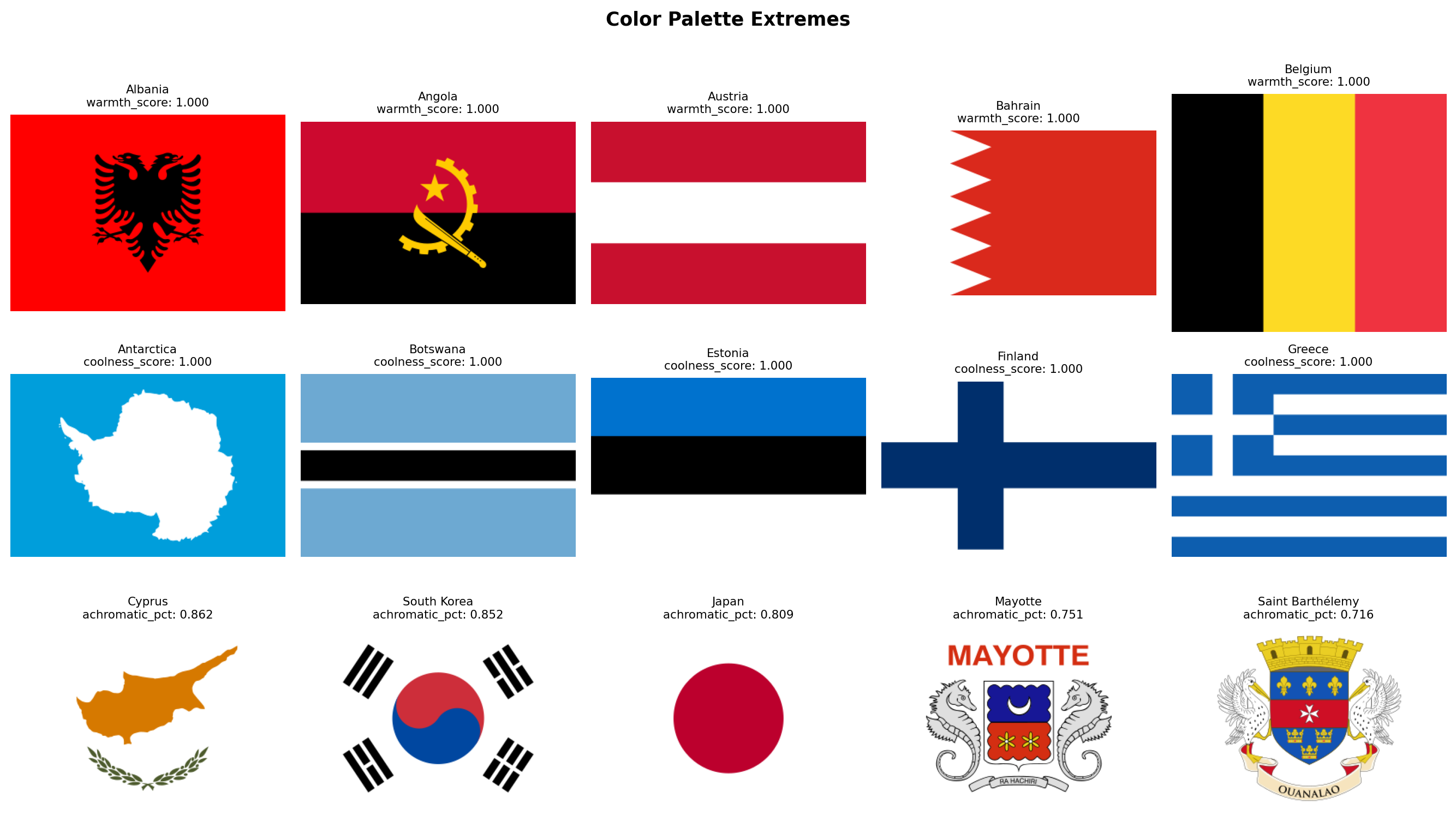

Finally, let's look at the actual flags sitting at the extremes. Which flags are the most dominated by warm colors? Which are the coolest? And which flags have almost no chromatic content at all (dominated by white and black)?

```{python}

#| label: extreme-warmth-coolness

#| code-summary: "Display the warmest, coolest, and most achromatic flags"

#| fig-cap: "Top row: the 5 flags with the highest warmth scores. Middle row: the 5 flags with the highest coolness scores. Bottom row: the 5 flags with the highest combined white + black area (most achromatic)."

# Achromatic dominance: how much of the flag is white + black (non-chromatic)

df_palette["achromatic_pct"] = df_palette["white_pct"] + df_palette["black_pct"]

groups = [

("warmth_score", True, "Warmest Flags"),

("coolness_score", True, "Coolest Flags"),

("achromatic_pct", True, "Most Achromatic Flags"),

]

fig, axes = plt.subplots(3, 5, figsize=(14, 8))

for row_idx, (metric, largest, title) in enumerate(groups):

subset = df_palette.nlargest(5, metric) if largest else df_palette.nsmallest(5, metric)

for col_idx, (_, flag_row) in enumerate(subset.iterrows()):

ax = axes[row_idx, col_idx]

img = rasterize_flag(flag_dir / f"{flag_row['code']}.svg", width=320)

ax.set_facecolor("#f0f0f0")

ax.imshow(img, aspect="equal")

score = flag_row[metric]

ax.set_title(f"{flag_row['name']}\n{metric}: {score:.3f}", fontsize=8)

ax.axis("off")

axes[row_idx, 0].set_ylabel(title, fontsize=9, rotation=0, labelpad=70, va="center")

plt.suptitle("Color Palette Extremes", fontsize=13, fontweight="bold", y=1.01)

plt.tight_layout()

plt.show()

```

### Discussion

Several patterns stand out from the data.

**Red is the world's flag color.** With a mean of 28.8% of flag area, red leads every other color. 39 flags dedicate more than half their area to red, led by China (97.4%), Morocco (97.0%), and Turkey (94.1%). This is consistent with heraldic tradition, where *gules* (red) is the most popular tincture, and with the cross-cultural associations of red: blood, courage, revolution, and sacrifice.

**Blue is close behind, but distributed differently.** Blue averages 24.8%, nearly tied with red, but it behaves differently. 108 flags (43% of the corpus) contain essentially zero blue, while 49 flags are more than half blue. Blue appears in an all-or-nothing pattern: when a flag uses blue, it tends to use a *lot* of it. The blue-dominant list reads like a map of the Pacific Ocean (Micronesia, Palau, Nauru, Australia, New Zealand) plus the British Ensign family (flags with Union Jacks on blue fields).

**White is a supporting color, not a leading one.** At 17.9%, white is the third most common color on average, but only 15 flags are majority-white. The white-dominant flags are revealing: Cyprus, Japan, South Korea, Israel, and Georgia are all flags with a simple symbol on a plain white field. White functions less as a "color" and more as negative space.

**Green clusters in specific traditions.** Green averages 13.6%, and 145 flags (58%) use essentially no green at all. But the green-dominant flags tell a clear story: Saudi Arabia (95.9%), Turkmenistan (83.7%), Bangladesh (79.0%), and Pakistan (68.8%) all belong to the Islamic design tradition. Brazil (69.1%) and Nigeria (66.9%) represent the Pan-African and Latin American strands respectively. Green is the most "culturally loaded" color in the corpus.

**Yellow and black are rare and specialized.** Yellow averages only 8.9%, and only 4 flags dedicate more than half their area to it. Black averages 4.6%, and no flag in the world is majority-black (Libya comes closest at 48.7%, followed by Papua New Guinea at 48.0%). Black appears most prominently in the Pan-African tricolor tradition (black-red-green or black-yellow-green) and in European tribands (Germany, Belgium).

**The warm-cool balance is remarkably even.** Mean warmth (0.515) and mean coolness (0.484) are nearly equal, suggesting that the world's flags, taken as a whole, are chromatically balanced. However, the distribution is bimodal rather than normal: 45 flags are purely warm (warmth > 0.95) and 22 are purely cool (coolness > 0.95), while 171 flags (68%) mix both warm and cool hues. Very few flags are chromatically neutral.

**The Solar Determinism hypothesis has an early lead.** The purely warm flags include many equatorial and tropical nations (Vietnam, Turkey, Morocco, China, Indonesia, Kyrgyzstan), while the purely cool flags include Scandinavian (Finland, Iceland), maritime (Micronesia, Palau, Nauru), and temperate nations (Estonia, Greece, Israel). This is suggestive, but not yet conclusive. We will need geographic coordinates and a proper statistical test to evaluate this hypothesis rigorously in the hypothesis testing section.

**A note on coverage.** Our six named color categories (red, blue, green, yellow, white, black) collectively account for 98.6% of all pixels across 250 flags. The remaining 1.4% falls into transitional regions that no single category claims: orange (H 11-19 in OpenCV, straddling red and yellow), muted tones produced by anti-aliasing at stripe boundaries, grays that are too saturated for the white mask but too desaturated for any chromatic mask, and the occasional purple. Ireland and Ivory Coast are the most affected: their orange stripes occupy a third of the flag area and are not counted by any individual color percentage. Crucially, these pixels are *not* lost to the analysis, the `warmth_score` and `coolness_score` metrics do cover the full chromatic range, as do the K-Means-based metrics in Family 2. The gap is confined to the six named-color breakdowns, which are intentionally strict to avoid false positives.

With the color palette extracted and explored, we have a clear picture of **what colors** each flag uses and how the world's flags distribute across the warm-cool spectrum. In the next section, we move to **Color Complexity**: not just *which* colors a flag uses, but *how many* and *how contrastingly*.

## Color Complexity

Family 1 told us *which* colors appear in each flag. Family 2 asks a different question: **how chromatically complex** is the flag's palette, and **how much do its colors contrast** with each other?

This family operationalizes two of NAVA's five principles. Principle 3 says *"Use two or three basic colors from the standard color set."* We can now test that rule quantitatively: do most flags actually use 2-3 colors, or is there a long tail of complex palettes? Principle 1 says *"Keep it simple"*, and a flag with high color contrast between its dominant blocks is visually simpler (more readable at a distance) than one where colors blur together.

We also introduce the **aggression index**, a compound metric that combines the area of red and black pixels. These two colors carry the heaviest symbolic weight in flag design: red for blood and revolution, black for mourning, resistance, and Pan-African identity. Their combined area gives us a single number to test the **Revolutionary Diagonal** hypothesis later.

**How it works, step by step:**

1. **Color quantization with K-Means.** We reduce each flag's millions of possible RGB values to a small set of representative colors by running K-Means clustering with `k=8` in RGB space. After clustering, we discard clusters that represent less than 1.5% of the total area (noise, anti-aliasing artifacts). We chose 1.5% rather than a higher threshold because some flags have small but important symbols, China's yellow stars, for instance, occupy only about 2.5% of the flag area. The number of surviving clusters is `palette_complexity`. Note that this is *not* the number of colors a human would name when looking at the flag. A human sees Afghanistan as a 4-color flag (black, red, green, white), but the emblem's fine artwork introduces brown, gold, and intermediate shades that push the pixel-level count to 7. This is intentional: `palette_complexity` measures how chromatically varied the design actually is, not how many colors appear in the official specification.

2. **Perceptual color contrast.** For each pair of dominant color clusters, we convert from RGB to the CIELAB color space and compute the CIEDE2000 color difference ($\Delta E_{00}$). Unlike luminance-only measures (such as the WCAG contrast ratio), CIEDE2000 accounts for differences in hue and saturation as well as lightness, so two colors that are equally dark but very different in hue, such as red and green, correctly score as highly contrastive. We report the maximum $\Delta E_{00}$ across all pairs of dominant colors. Values typically range from 0 (identical colors) to approximately 100 (black vs white), though maximally different chromatic pairs can slightly exceed 100.

3. **Aggression index.** Simply the sum of `red_pct` and `black_pct` from Family 1. We compute it here rather than deriving it later so that Family 2's DataFrame is self-contained.

```{python}

#| label: color-complexity-fn

#| code-summary: "Color complexity extraction function"

from sklearn.cluster import MiniBatchKMeans

from skimage.color import rgb2lab, deltaE_ciede2000

def compute_color_complexity(img_rgb, red_pct, black_pct):

"""Extract 3 color complexity metrics from an RGB flag image.

Parameters

----------

img_rgb : np.ndarray

RGB image array of shape (H, W, 3).

red_pct : float

Pre-computed red area fraction from Family 1 (avoids recomputation).

black_pct : float

Pre-computed black area fraction from Family 1.

Returns

-------

dict with keys: palette_complexity, color_contrast, aggression_index

"""

# Step 1: flatten pixels and run K-Means with k=8

pixels = img_rgb.reshape(-1, 3).astype(np.float64)

kmeans = MiniBatchKMeans(n_clusters=8, random_state=42, n_init=3, batch_size=1024)

labels = kmeans.fit_predict(pixels)

centers = kmeans.cluster_centers_ # shape (8, 3)

# Compute the proportion of pixels in each cluster

total = len(labels)

proportions = np.array([(labels == i).sum() / total for i in range(8)])

# Step 2: keep only clusters above the 1.5% noise threshold.

# We use 1.5% rather than a higher cutoff because some flags have

# small but visually important symbols (e.g., China's yellow stars

# occupy ~2.5% of the flag area, and Micronesia's white stars ~2.4%).

# A threshold of 3% would erase these, collapsing the flag to 1 color.

significant = proportions >= 0.015

n_distinct = int(significant.sum())

sig_centers = centers[significant]

sig_proportions = proportions[significant]

# Step 3: compute maximum perceptual color distance (CIEDE2000)

# We convert each dominant color to CIELAB and compute delta-E between all

# pairs. Unlike WCAG luminance contrast, CIEDE2000 captures differences in

# hue and saturation as well as lightness, so red vs green (both dark)

# correctly scores as highly contrastive.

max_contrast = 0.0

if len(sig_centers) >= 2:

# rgb2lab expects (H, W, 3) float64 in [0, 1]

lab_colors = rgb2lab(sig_centers.reshape(1, -1, 3) / 255.0)[0] # shape (N, 3)

for i in range(len(lab_colors)):

for j in range(i + 1, len(lab_colors)):

de = deltaE_ciede2000(

lab_colors[i].reshape(1, 1, 3),

lab_colors[j].reshape(1, 1, 3),

)[0, 0]

if de > max_contrast:

max_contrast = de

# Step 4: aggression index = red + black area from Family 1

aggression = round(red_pct + black_pct, 4)

return {

"palette_complexity": n_distinct,

"color_contrast": round(max_contrast, 2),

"aggression_index": aggression,

}

```

### Extraction

We now run the extraction loop. Since the aggression index reuses `red_pct` and `black_pct` from Family 1, we pull those values from `df_palette` rather than recomputing them.

```{python}

#| label: extract-color-complexity

#| code-summary: "Run color complexity extraction on all flags"

records_complexity = []

for _, row in df_palette.iterrows():

svg_path = flag_dir / f"{row['code']}.svg"

if not svg_path.exists():

continue

img = rasterize_flag(svg_path)

metrics = {"code": row["code"], "name": row["name"]}

metrics.update(compute_color_complexity(img, row["red_pct"], row["black_pct"]))

records_complexity.append(metrics)

df_complexity = pd.DataFrame(records_complexity)

print(f"Color complexity extracted: {df_complexity.shape[0]} flags x {df_complexity.shape[1]} columns")

itshow(df_complexity, lengthMenu=[5, 10, 25, 50], pageLength=5)

```

### Palette Complexity

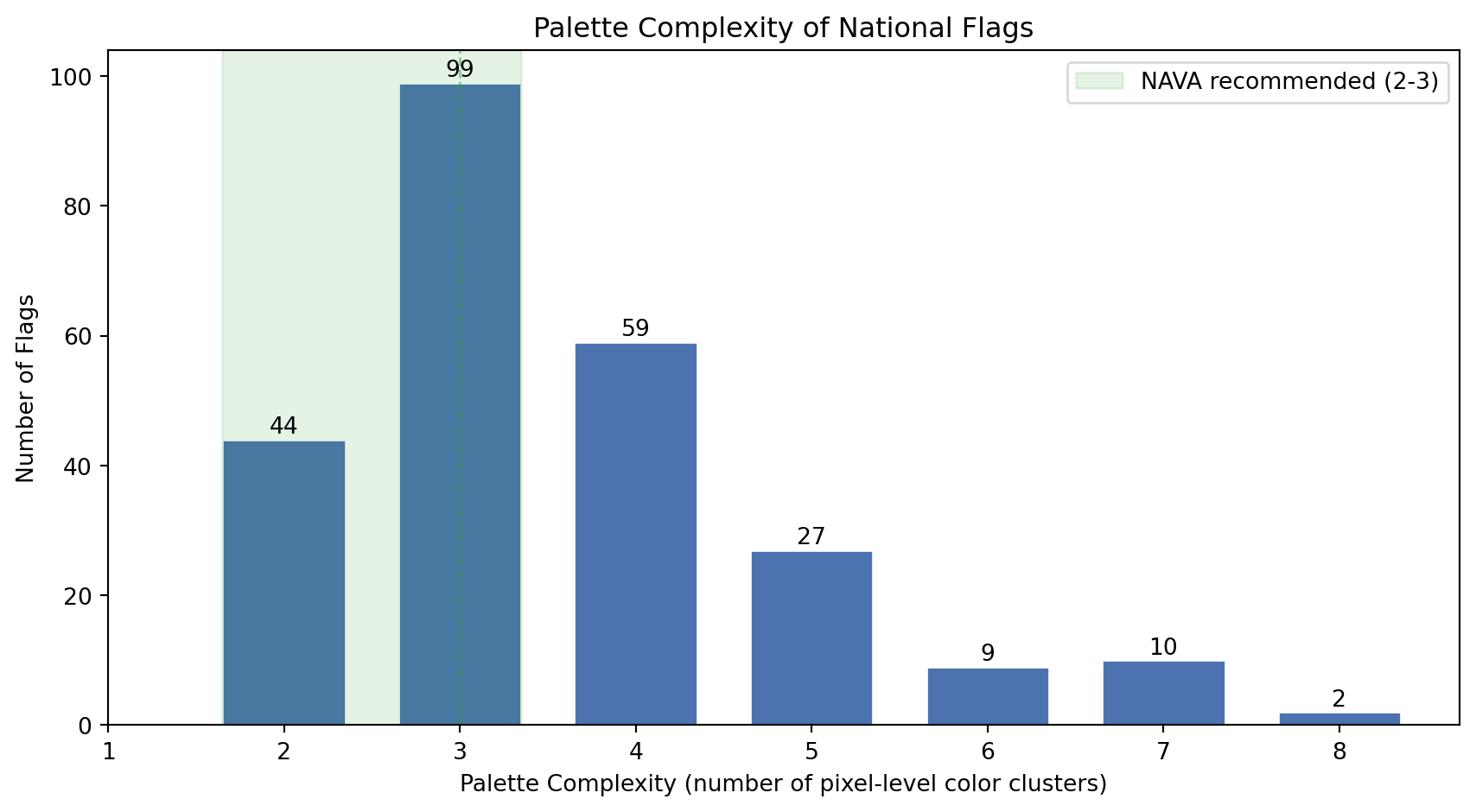

NAVA's third principle recommends 2-3 colors. Our `palette_complexity` metric captures something broader: the number of distinct color clusters at the pixel level, including shading and emblem detail. Let's see how the world's flags distribute on this scale.

```{python}

#| label: palette-complexity-histogram

#| code-summary: "Distribution of palette complexity across all flags"

#| fig-cap: "Distribution of palette complexity (number of significant pixel-level color clusters) across all 250 flags. The dashed green band marks NAVA's recommended range of 2-3 colors. Flags with detailed emblems or coats of arms push the count above 5."

fig, ax = plt.subplots(figsize=(9, 5))

counts = df_complexity["palette_complexity"].value_counts().sort_index()

bars = ax.bar(counts.index, counts.values, color="#4C72B0", edgecolor="white", width=0.7)

# Highlight NAVA's recommended zone (2-3 colors)

ax.axvspan(1.65, 3.35, alpha=0.12, color="#2ca02c", label="NAVA recommended (2-3)")

ax.axvline(3, color="#2ca02c", linestyle="--", linewidth=1, alpha=0.5)

# Label each bar with its count

for bar in bars:

height = bar.get_height()

ax.text(bar.get_x() + bar.get_width() / 2, height + 1,

str(int(height)), ha="center", fontsize=10)

ax.set_xlabel("Palette Complexity (number of pixel-level color clusters)")

ax.set_ylabel("Number of Flags")

ax.set_title("Palette Complexity of National Flags")

ax.legend(loc="upper right")

ax.set_xticks(range(1, 9))

plt.tight_layout()

plt.show()

```

```{python}

#| label: color-count-stats

#| code-summary: "NAVA compliance statistics"

n = len(df_complexity)

n_nava = ((df_complexity["palette_complexity"] >= 2) & (df_complexity["palette_complexity"] <= 3)).sum()

n_under = (df_complexity["palette_complexity"] < 2).sum()

n_over = (df_complexity["palette_complexity"] > 3).sum()

median_colors = df_complexity["palette_complexity"].median()

mean_colors = df_complexity["palette_complexity"].mean()

print(f"NAVA-compliant (2-3 colors): {n_nava} flags ({n_nava/n:.0%})")

print(f"Fewer than 2 colors: {n_under} flags")

print(f"More than 3 colors: {n_over} flags ({n_over/n:.0%})")

print(f"Median colors: {median_colors:.0f}")

print(f"Mean colors: {mean_colors:.1f}")

```

The numbers tell us something interesting: while NAVA recommends 2-3 colors, `palette_complexity` often exceeds that range. This does not mean most flags are badly designed. It reflects the gap between official color specifications and pixel-level reality. Afghanistan officially has 4 colors, but its emblem renders as 7 pixel clusters. A clean tricolor like France scores exactly 3. The metric correctly separates *geometrically simple* designs from *artistically detailed* ones, which is precisely the dimension we want to capture.

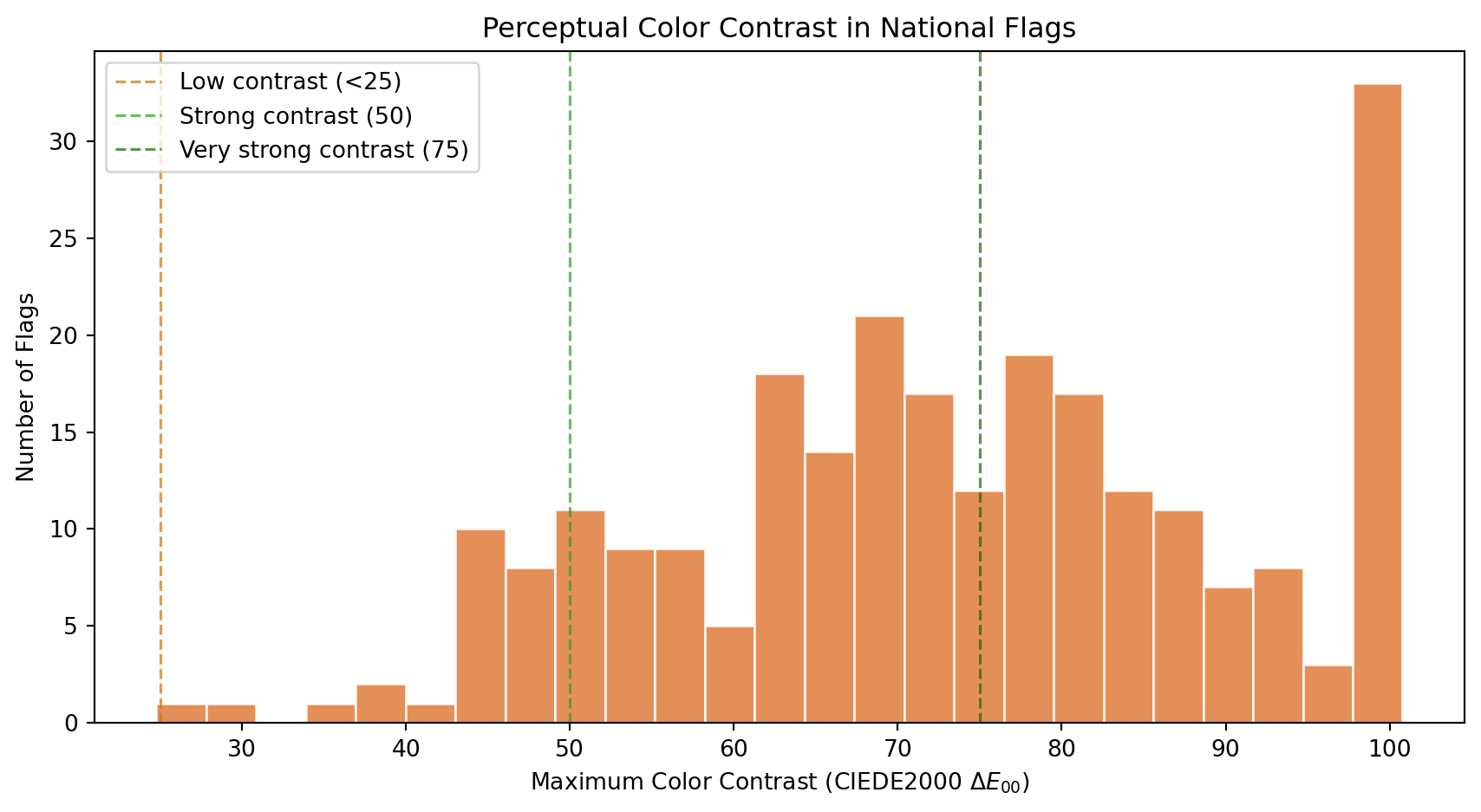

### Color Contrast

How different are a flag's dominant colors from each other? High contrast (e.g., white on black, red on green, blue on yellow) makes a flag readable from far away, which is the original functional purpose of a flag: identification at a distance on a battlefield or a ship. Low contrast suggests a monochromatic or analogous palette that prioritizes subtlety over raw visibility.

We measure contrast using **CIEDE2000** ($\Delta E_{00}$), the international standard for perceptual color difference. Unlike luminance-only measures (such as the WCAG contrast ratio, which only captures lightness differences), CIEDE2000 operates in the CIELAB color space and accounts for hue and saturation as well as lightness. This means red vs dark green, two colors with nearly identical luminance but very different hues, correctly scores as highly contrastive.

```{python}

#| label: contrast-histogram

#| code-summary: "Distribution of maximum perceptual color contrast"

#| fig-cap: "Distribution of the maximum CIEDE2000 color difference between any two dominant colors. A value of 0 means all dominant colors are perceptually identical. Pure black vs white scores approximately 100, though maximally different chromatic pairs (like deep blue vs vivid yellow) can slightly exceed that. Values above 50 indicate strongly distinct palettes."

fig, ax = plt.subplots(figsize=(9, 5))

ax.hist(df_complexity["color_contrast"], bins=25, color="#E07B39",

edgecolor="white", alpha=0.85)

# Perceptual distance reference thresholds

ax.axvline(25, color="#cc7700", linestyle="--", linewidth=1.2, alpha=0.7, label="Low contrast (<25)")

ax.axvline(50, color="#2ca02c", linestyle="--", linewidth=1.2, alpha=0.7, label="Strong contrast (50)")

ax.axvline(75, color="#1a6600", linestyle="--", linewidth=1.2, alpha=0.7, label="Very strong contrast (75)")

ax.set_xlabel("Maximum Color Contrast (CIEDE2000 $\Delta E_{00}$)")

ax.set_ylabel("Number of Flags")

ax.set_title("Perceptual Color Contrast in National Flags")

ax.legend(loc="upper left")

plt.tight_layout()

plt.show()

```

```{python}

#| label: contrast-stats

#| code-summary: "Perceptual contrast statistics"

high_contrast = (df_complexity["color_contrast"] >= 75).sum()

strong_contrast = ((df_complexity["color_contrast"] >= 50) & (df_complexity["color_contrast"] < 75)).sum()

moderate_contrast = ((df_complexity["color_contrast"] >= 25) & (df_complexity["color_contrast"] < 50)).sum()

low_contrast = (df_complexity["color_contrast"] < 25).sum()

print(f"Very strong contrast (>= 75): {high_contrast} flags ({high_contrast/n:.0%})")

print(f"Strong contrast (50-75): {strong_contrast} flags ({strong_contrast/n:.0%})")

print(f"Moderate contrast (25-50): {moderate_contrast} flags ({moderate_contrast/n:.0%})")

print(f"Low contrast (< 25): {low_contrast} flags ({low_contrast/n:.0%})")

print(f"Mean color contrast: {df_complexity['color_contrast'].mean():.1f}")

print(f"Median color contrast: {df_complexity['color_contrast'].median():.1f}")

```

Flag designers are instinctive contrast engineers. The distribution reveals that most national flags achieve strong perceptual separation between their dominant colors, which makes sense: a flag that cannot be distinguished at 200 meters fails its primary purpose.

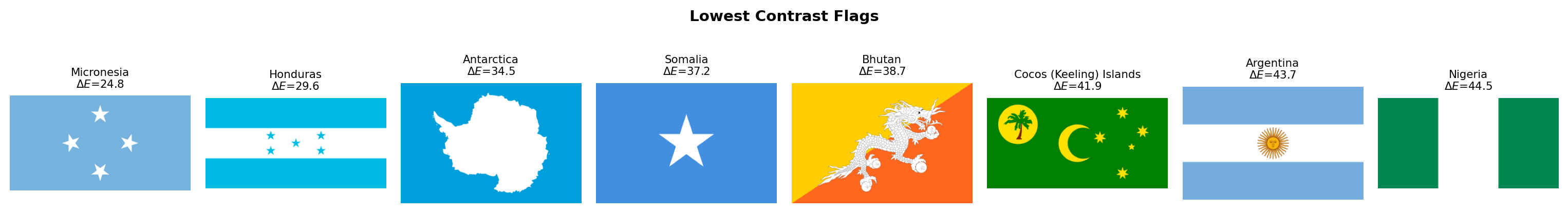

The few flags with low contrast deserve individual attention. Let's see which they are:

```{python}

#| label: lowest-contrast-flags

#| code-summary: "Flags with the lowest perceptual color contrast"

#| fig-cap: "The 8 flags with the lowest CIEDE2000 color contrast. These designs use colors that are perceptually close to each other, whether through similar hues, similar lightness, or both."

low_df = df_complexity.nsmallest(8, "color_contrast")

fig, axes = plt.subplots(1, 8, figsize=(16, 2.5))

for ax, (_, row) in zip(axes, low_df.iterrows()):

img = rasterize_flag(flag_dir / f"{row['code']}.svg", width=320)

ax.set_facecolor("#f0f0f0")

ax.imshow(img, aspect="equal")

ax.set_title(f"{row['name']}\n$\Delta E$={row['color_contrast']:.1f}", fontsize=8)

ax.axis("off")

plt.suptitle("Lowest Contrast Flags", fontsize=11, fontweight="bold")

plt.tight_layout()

plt.show()

```

### The Aggression Index

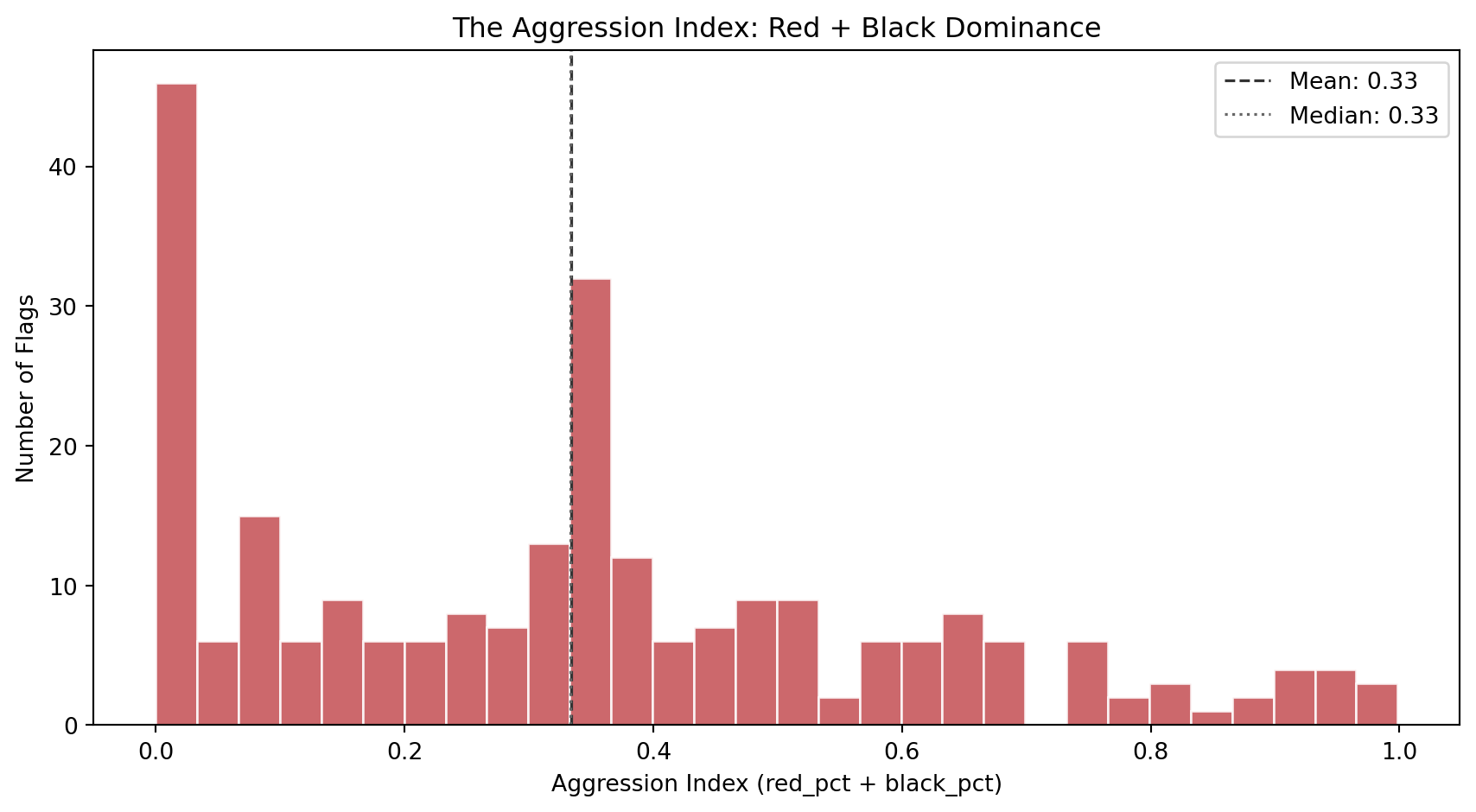

The aggression index combines the two most symbolically intense colors in flag design: red (blood, revolution, sacrifice) and black (mourning, resistance, heritage). A high aggression index does not literally mean the country is aggressive. It captures a specific *aesthetic posture*: the visual weight of colors historically associated with struggle and defiance.

```{python}

#| label: aggression-histogram

#| code-summary: "Distribution of the aggression index (red + black area)"

#| fig-cap: "Distribution of the aggression index across 250 flags. The index ranges from 0 (no red or black at all) to nearly 1 (almost entirely red and black). The bimodal shape suggests two design populations: flags that avoid red/black entirely, and flags that lean heavily into them."

fig, ax = plt.subplots(figsize=(9, 5))

ax.hist(df_complexity["aggression_index"], bins=30, color="#C44E52",

edgecolor="white", alpha=0.85)

ax.axvline(df_complexity["aggression_index"].mean(), color="#333",

linestyle="--", linewidth=1.2, label=f"Mean: {df_complexity['aggression_index'].mean():.2f}")

ax.axvline(df_complexity["aggression_index"].median(), color="#666",

linestyle=":", linewidth=1.2, label=f"Median: {df_complexity['aggression_index'].median():.2f}")

ax.set_xlabel("Aggression Index (red_pct + black_pct)")

ax.set_ylabel("Number of Flags")

ax.set_title("The Aggression Index: Red + Black Dominance")

ax.legend(loc="upper right")

plt.tight_layout()

plt.show()

```

Which flags sit at the extremes? The strip below shows the 8 most aggressive and 8 most peaceful designs:

```{python}

#| label: aggression-extremes

#| code-summary: "Most and least aggressive flags by the aggression index"

#| fig-cap: "Top row: the 8 flags with the highest aggression index (most red + black). Bottom row: the 8 flags with the lowest aggression index (least red and black). The aggressive row reads like a list of revolution and resistance; the peaceful row is dominated by blue, green, and yellow palettes."

fig, axes = plt.subplots(2, 8, figsize=(18, 4.5))

for row_idx, (subset, title) in enumerate([

(df_complexity.nlargest(8, "aggression_index"), "Most Aggressive"),

(df_complexity.nsmallest(8, "aggression_index"), "Most Peaceful"),

]):

for col_idx, (_, flag_row) in enumerate(subset.iterrows()):

ax = axes[row_idx, col_idx]

img = rasterize_flag(flag_dir / f"{flag_row['code']}.svg", width=320)

ax.set_facecolor("#f0f0f0")

ax.imshow(img, aspect="equal")

ax.set_title(f"{flag_row['name']}\n{flag_row['aggression_index']:.2f}", fontsize=7)

ax.axis("off")

axes[row_idx, 0].set_ylabel(title, fontsize=9, rotation=0, labelpad=65, va="center")

plt.suptitle("Aggression Index Extremes", fontsize=12, fontweight="bold", y=1.01)

plt.tight_layout()

plt.show()

```

### Complexity vs Contrast

Do flags with more colors also tend to have higher contrast, or is there a trade-off? The scatter plot below maps each flag in the space of color count vs contrast ratio, with the aggression index encoded as marker color (red = high aggression, blue = low). This gives us a three-dimensional view of color complexity in a single chart.

```{python}

#| label: complexity-vs-contrast

#| code-summary: "Interactive scatter: color count vs contrast, colored by aggression"

#| fig-cap: "Each dot is a flag. X-axis: palette complexity (pixel-level color clusters). Y-axis: maximum perceptual color contrast. Color: aggression index (red = high, blue = low). Flags in the upper-right are both chromatically complex and high-contrast. Flags in the lower-left are simple and low-contrast."

fig = px.scatter(

df_complexity, x="palette_complexity", y="color_contrast",

color="aggression_index",

color_continuous_scale="RdYlBu_r",

range_color=[0, 1],

hover_name="name",

hover_data={"palette_complexity": True, "color_contrast": ":.1f",

"aggression_index": ":.2f"},

labels={"palette_complexity": "Palette Complexity",

"color_contrast": "Max Color Contrast (CIEDE2000 ΔE₀₀)",

"aggression_index": "Aggression Index"},

title="Palette Complexity vs Color Contrast",

opacity=0.75, width=800, height=600,

)

fig.update_layout(xaxis=dict(dtick=1))

fig.show()

```

### Warmth Meets Aggression

Before moving on, let's bridge Family 1 and Family 2 with one final visualization. Is there a relationship between a flag's color *temperature* (warmth score) and its *aggression* (red + black area)? Intuitively there should be: warm flags tend to be red, and red is a major component of the aggression index. But the relationship is not guaranteed to be linear, since a flag can be warm through yellow/orange rather than red, and aggression also includes black.

```{python}

#| label: warmth-vs-aggression

#| code-summary: "Interactive scatter connecting Family 1 (warmth) to Family 2 (aggression)"

#| fig-cap: "Each dot is a flag. X-axis: warmth score (Family 1). Y-axis: aggression index (Family 2). The positive correlation confirms that warm flags tend to be aggressive, but the scatter reveals many exceptions: warm-but-peaceful flags (orange/yellow dominance) and cool-but-aggressive flags (flags that combine blue with significant black areas)."

# Merge Family 1 and Family 2 for cross-referencing

df_cross = df_palette[["code", "name", "warmth_score"]].merge(

df_complexity[["code", "aggression_index", "color_contrast"]], on="code"

)

corr = df_cross["warmth_score"].corr(df_cross["aggression_index"])

fig = px.scatter(

df_cross, x="warmth_score", y="aggression_index",

color="color_contrast",

color_continuous_scale="viridis",

hover_name="name",

hover_data={"warmth_score": ":.2f", "aggression_index": ":.2f",

"color_contrast": ":.1f"},

labels={"warmth_score": "Warmth Score (Family 1)",

"aggression_index": "Aggression Index (Family 2)",

"color_contrast": "Color Contrast (ΔE₀₀)"},

title=f"Color Temperature vs Aggression (Pearson r = {corr:.3f})",

opacity=0.75, width=800, height=600,

)

fig.update_layout(xaxis_range=[-0.05, 1.05], yaxis_range=[-0.05, 1.05])

fig.show()

```

### Discussion

Several findings emerge from Family 2.

**Palette complexity captures more than the "official" color count.** A human looking at Afghanistan's flag sees 4 colors (black, red, green, white). Wikipedia's specification lists 6 (counting two shades of red and two shades of green in the emblem). Our metric finds 7, because the emblem's fine artwork, mosque, wheat wreath, Arabic script, introduces browns, golds, and intermediate shades at the pixel level. This gap between human perception, official specification, and pixel reality is the point. We deliberately named this metric `palette_complexity` rather than "number of colors" to signal that it measures **chromatic variety in the rendered image**, not the count a person would give. Flags with clean geometric designs (tricolors, bicolors) score 2-3. Flags with detailed coats of arms or multi-shade emblems score 5-7. This is exactly the dimension that NAVA's simplicity principle targets: a flag that a child can draw from memory will have low palette complexity, while one requiring an artist will score high.

**Flag designers are master contrast engineers.** When measured with CIEDE2000 (which captures hue and saturation differences, not just lightness), most flags show strong perceptual separation between their dominant colors. Red-and-green flags like Bangladesh and Maldives, which would score near 1:1 on a luminance-only scale, correctly register as highly contrastive here because red and green sit on opposite sides of the CIELAB color space. Centuries before color science formalized these distinctions, flag designers were already exploiting the full perceptual gamut to maximize visibility at a distance.

**The aggression index is bimodal.** Flags cluster into two groups: those that avoid red and black (many Islamic, Pacific, and blue-tradition flags), and those that lean into them (Pan-African, revolutionary, and European flags). The bimodal distribution is a first hint that flags do not occupy a single design continuum but fall into distinct stylistic traditions.

**Warmth and aggression are correlated but not identical.** Warm flags tend to be aggressive (high red content drives both metrics), but the scatter reveals a meaningful population of exceptions. Warm-but-peaceful flags use orange and yellow instead of red (e.g., some Asian flags with gold). Cool-but-aggressive flags combine blue with black (e.g., Estonia). These exceptions are exactly the kind of flags that will become interesting outliers in clustering.

With both the palette and complexity of each flag now quantified, we have 11 features (8 + 3) describing the *color* dimension of flag design. In the next section, we shift from color to **geometry**: how busy is the design, and what structural patterns define it?

## Visual Complexity

Families 1 and 2 described the *color* of each flag. Family 3 asks a different question: **how visually complex is the design itself?** A tricolor with three solid blocks of color is among the simplest possible flag designs. A flag featuring a detailed coat of arms, animals, weapons, text, and ornamental borders is visually complex. NAVA's first principle, *"Keep it simple: the flag should be so simple that a child can draw it from memory"*, and fourth principle, *"No lettering or seals"*, both relate directly to this dimension.

We measure complexity from three complementary angles, each capturing something the others miss:

1. **Visual entropy** (Shannon entropy of the grayscale histogram). This is an information-theoretic measure. A perfectly uniform image has zero entropy; a perfectly random image has maximum entropy. Flags with few distinct gray levels (solid stripes) score low; flags with many gray levels (gradients, shadows, fine artwork) score high.

2. **Edge density** (fraction of edge pixels detected by the Canny algorithm). This is a geometric measure. Every boundary between colors, every line in an emblem, every contour of a coat of arms contributes an edge pixel. A simple tricolor has edges only at the stripe boundaries; a flag with a detailed eagle emblem is dense with edges.

3. **Spatial entropy** (entropy of the color distribution across a 4x4 grid). This captures *where* the complexity lives. Two flags can have identical visual entropy, but one distributes its complexity evenly (like the USA's stars and stripes) while the other concentrates it in one spot (like Japan's red circle on white). Spatial entropy distinguishes these two cases.

```{python}

#| label: visual-complexity-fn

#| code-summary: "Visual complexity extraction function"

def compute_visual_complexity(img_rgb):

"""Extract 3 visual complexity metrics from an RGB flag image.

Parameters

----------

img_rgb : np.ndarray

RGB image array of shape (H, W, 3).

Returns

-------

dict with keys: visual_entropy, edge_density, spatial_entropy

"""

# Step 1: convert to grayscale for entropy and edge detection.

# We use OpenCV's standard weighted formula (0.299R + 0.587G + 0.114B)

# rather than a simple average, because it better matches human

# brightness perception.

gray = cv2.cvtColor(img_rgb, cv2.COLOR_RGB2GRAY)

# Step 2: visual entropy -- Shannon entropy of the grayscale histogram.

# We compute a 256-bin histogram (one bin per possible gray value),

# normalize it to a probability distribution, and compute entropy.

# The result is in bits. Maximum possible is log2(256) = 8.0 bits for

# a perfectly uniform histogram (every gray value equally likely).

hist, _ = np.histogram(gray.ravel(), bins=256, range=(0, 256))

hist_prob = hist / hist.sum()

vis_entropy = float(shannon_entropy(hist_prob, base=2))

# Step 3: edge density -- Canny edge fraction.

# Canny is a multi-stage edge detector: it smooths the image with a

# Gaussian, computes gradients, applies non-maximum suppression, and

# uses hysteresis thresholding. The sigma parameter controls the

# smoothing scale. We use sigma=1.0, a standard choice that balances

# noise rejection with detail preservation.

edges = canny(gray, sigma=1.0)

edge_dens = float(edges.sum() / edges.size)

# Step 4: spatial entropy -- how uniformly is color complexity distributed?

# We divide the flag into a 4x4 grid of cells (16 cells total).

# For each cell, we compute the mean RGB color, then measure how

# diverse these 16 mean colors are using Shannon entropy on the

# distribution of unique color clusters.

h, w = img_rgb.shape[:2]

rows, cols = 4, 4

cell_h, cell_w = h // rows, w // cols

# Collect the mean color of each grid cell

cell_colors = []

for r in range(rows):

for c in range(cols):

cell = img_rgb[r*cell_h:(r+1)*cell_h, c*cell_w:(c+1)*cell_w]

cell_colors.append(cell.mean(axis=(0, 1)))

cell_colors = np.array(cell_colors) # shape (16, 3)

# Quantize cell colors into a small number of bins by rounding

# each channel to the nearest 32 (gives 8 levels per channel).

# Then count how many cells share the same quantized color.

quantized = (cell_colors / 32).astype(int)

# Convert to hashable tuples for counting

color_tuples = [tuple(q) for q in quantized]

from collections import Counter

counts = Counter(color_tuples)

probs = np.array(list(counts.values())) / len(color_tuples)

spat_entropy = float(shannon_entropy(probs, base=2))

return {

"visual_entropy": round(vis_entropy, 4),

"edge_density": round(edge_dens, 4),

"spatial_entropy": round(spat_entropy, 4),

}

```

### Extraction

We run the visual complexity extraction across all flags. This family does not depend on any previous results, so we iterate directly over the country index.

```{python}

#| label: extract-visual-complexity

#| code-summary: "Run visual complexity extraction on all flags"

records_visual = []

for _, row in df_palette.iterrows():

svg_path = flag_dir / f"{row['code']}.svg"

if not svg_path.exists():

continue

img = rasterize_flag(svg_path)

metrics = {"code": row["code"], "name": row["name"]}

metrics.update(compute_visual_complexity(img))

records_visual.append(metrics)

df_visual = pd.DataFrame(records_visual)

print(f"Visual complexity extracted: {df_visual.shape[0]} flags x {df_visual.shape[1]} columns")

itshow(df_visual, lengthMenu=[5, 10, 25, 50], pageLength=5)

```

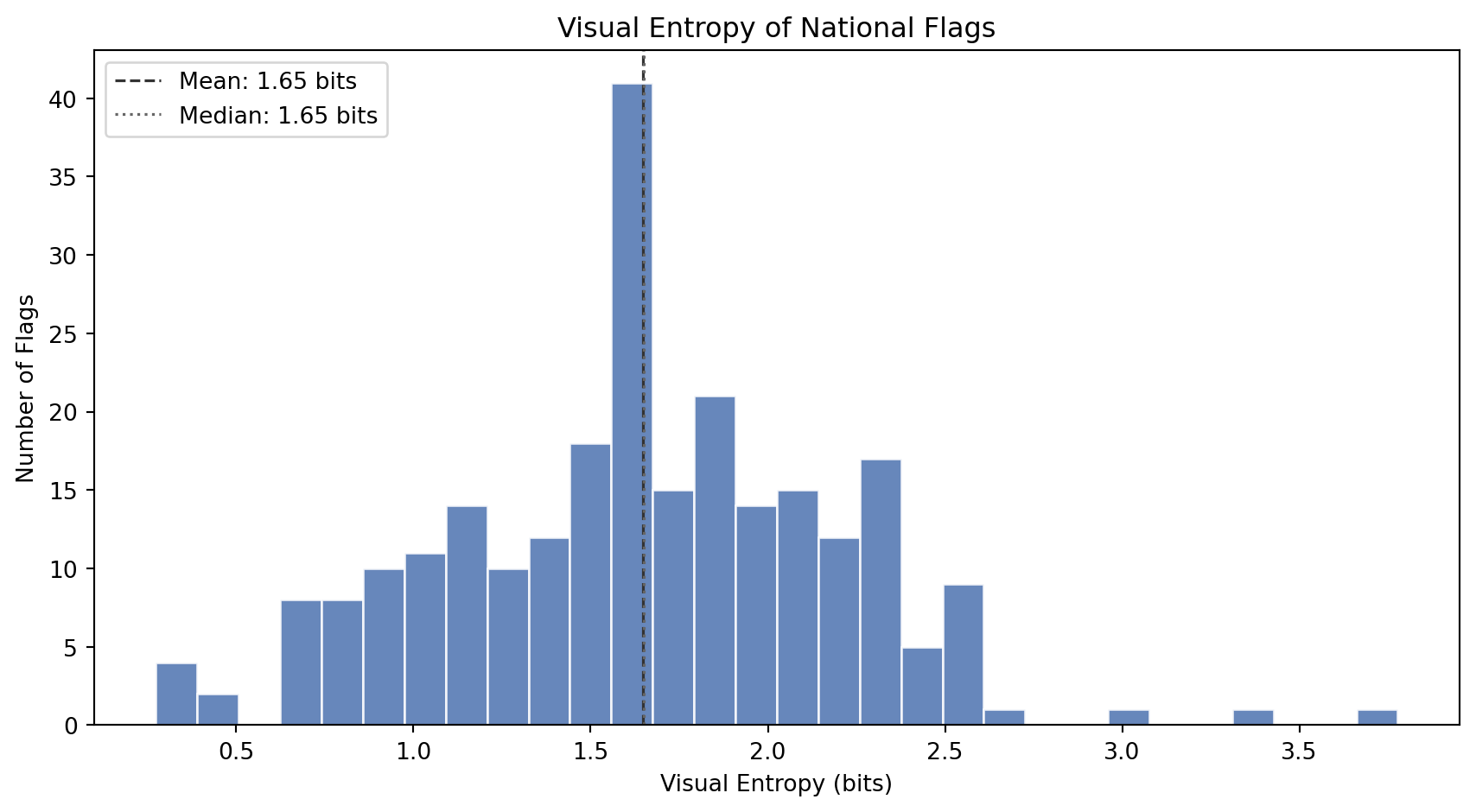

### Visual Entropy

How much *information* does a flag's grayscale image contain? A tricolor made of three solid blocks has very few distinct gray levels and low entropy. A flag with gradients, shading, emblem detail, and anti-aliased curves has many gray levels and high entropy.

```{python}

#| label: entropy-histogram

#| code-summary: "Distribution of visual entropy across flags"

#| fig-cap: "Distribution of visual entropy (Shannon entropy of the grayscale histogram, in bits). Low entropy means the flag has few distinct brightness levels (simple geometric designs). High entropy means the flag contains many brightness levels (detailed artwork, gradients, fine textures)."

fig, ax = plt.subplots(figsize=(9, 5))

ax.hist(df_visual["visual_entropy"], bins=30, color="#4C72B0",

edgecolor="white", alpha=0.85)

ax.axvline(df_visual["visual_entropy"].mean(), color="#333",

linestyle="--", linewidth=1.2,

label=f"Mean: {df_visual['visual_entropy'].mean():.2f} bits")

ax.axvline(df_visual["visual_entropy"].median(), color="#666",

linestyle=":", linewidth=1.2,

label=f"Median: {df_visual['visual_entropy'].median():.2f} bits")

ax.set_xlabel("Visual Entropy (bits)")

ax.set_ylabel("Number of Flags")

ax.set_title("Visual Entropy of National Flags")

ax.legend(loc="upper left")

plt.tight_layout()

plt.show()

```

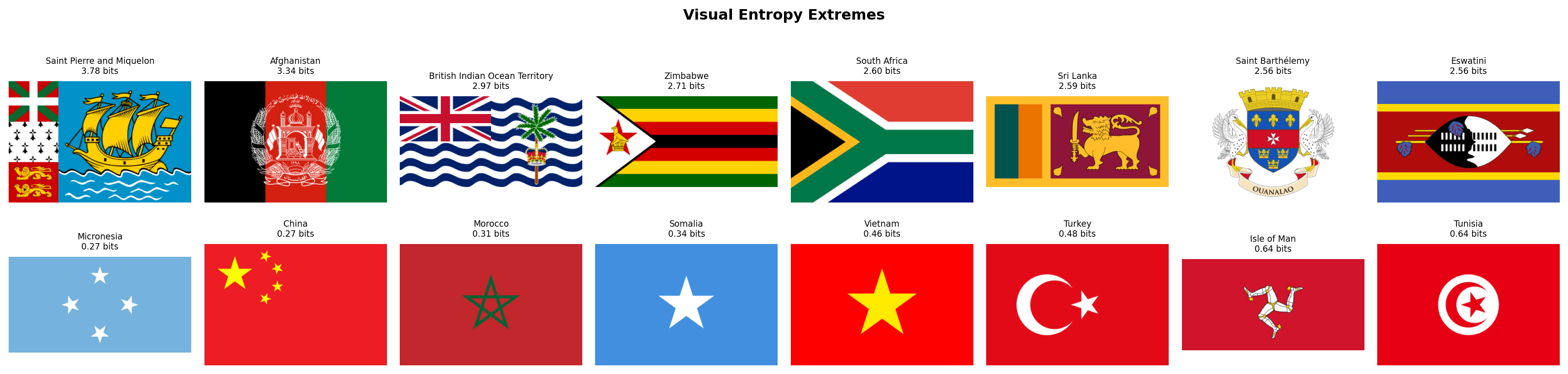

```{python}

#| label: entropy-extremes

#| code-summary: "Flags with the highest and lowest visual entropy"

#| fig-cap: "Top row: the 8 flags with the highest visual entropy (most information-rich grayscale profiles). These flags contain detailed coats of arms, complex heraldry, or multi-element designs. Bottom row: the 8 flags with the lowest entropy (simplest grayscale profiles). These are clean geometric designs with very few distinct brightness levels."

fig, axes = plt.subplots(2, 8, figsize=(18, 4.5))

for row_idx, (subset, title) in enumerate([

(df_visual.nlargest(8, "visual_entropy"), "Most Complex"),

(df_visual.nsmallest(8, "visual_entropy"), "Simplest"),

]):

for col_idx, (_, flag_row) in enumerate(subset.iterrows()):

ax = axes[row_idx, col_idx]

img = rasterize_flag(flag_dir / f"{flag_row['code']}.svg", width=320)

ax.set_facecolor("#f0f0f0")

ax.imshow(img, aspect="equal")

ax.set_title(f"{flag_row['name']}\n{flag_row['visual_entropy']:.2f} bits", fontsize=7)

ax.axis("off")

axes[row_idx, 0].set_ylabel(title, fontsize=9, rotation=0, labelpad=65, va="center")

plt.suptitle("Visual Entropy Extremes", fontsize=12, fontweight="bold", y=1.01)

plt.tight_layout()

plt.show()

```

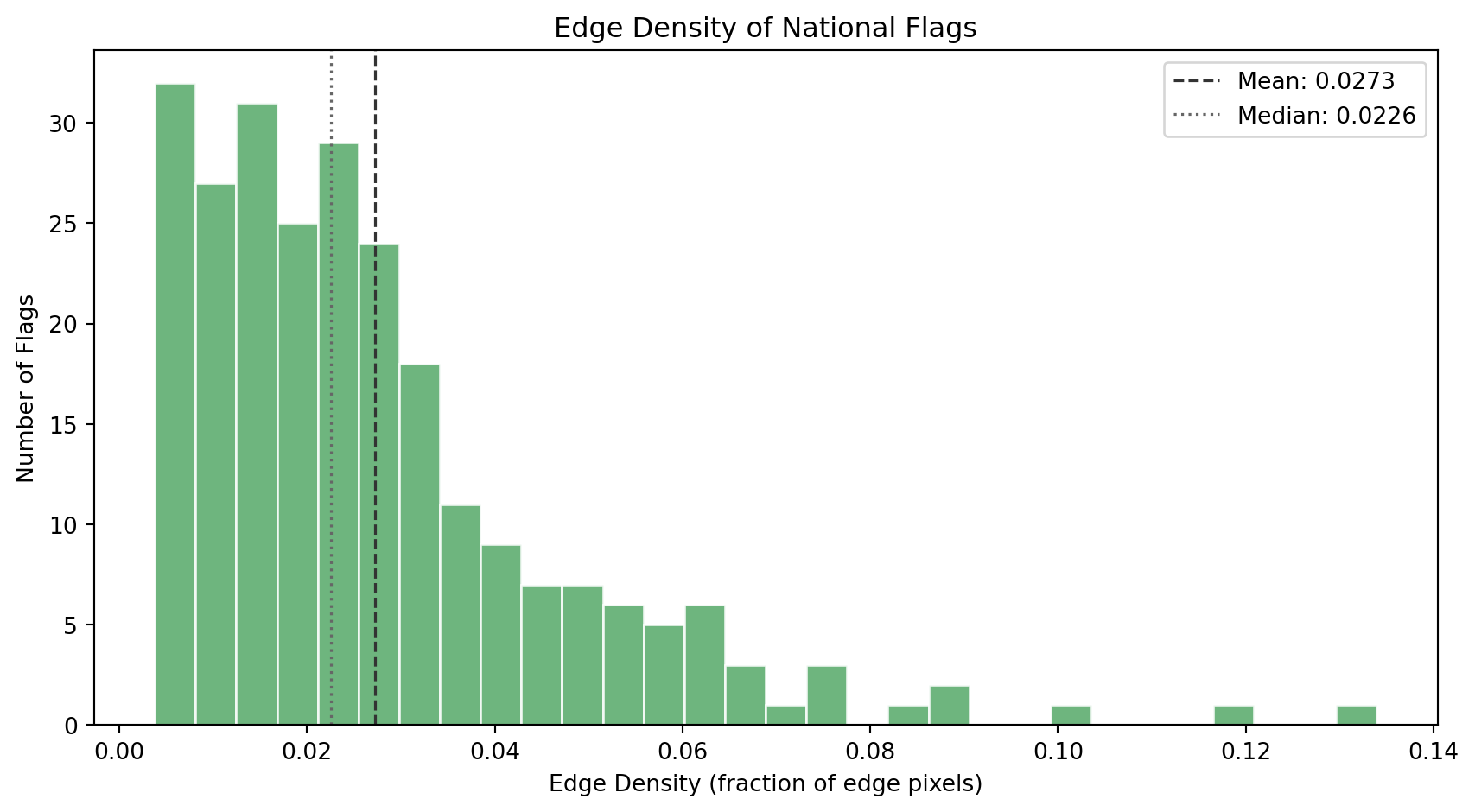

### Edge Density

Edge density tells us how many *boundaries* and *contours* exist in the flag's design. The Canny edge detector finds pixels where brightness changes sharply, stripe boundaries, emblem outlines, text contours, and ornamental detail.

```{python}

#| label: edge-histogram

#| code-summary: "Distribution of edge density across flags"

#| fig-cap: "Distribution of edge density (fraction of pixels detected as edges). Flags cluster in the low range: most designs are geometrically clean. A long right tail captures flags with detailed emblems, coats of arms, and ornamental borders."

fig, ax = plt.subplots(figsize=(9, 5))

ax.hist(df_visual["edge_density"], bins=30, color="#55A868",

edgecolor="white", alpha=0.85)

ax.axvline(df_visual["edge_density"].mean(), color="#333",

linestyle="--", linewidth=1.2,

label=f"Mean: {df_visual['edge_density'].mean():.4f}")

ax.axvline(df_visual["edge_density"].median(), color="#666",

linestyle=":", linewidth=1.2,

label=f"Median: {df_visual['edge_density'].median():.4f}")

ax.set_xlabel("Edge Density (fraction of edge pixels)")

ax.set_ylabel("Number of Flags")

ax.set_title("Edge Density of National Flags")

ax.legend(loc="upper right")

plt.tight_layout()

plt.show()

```

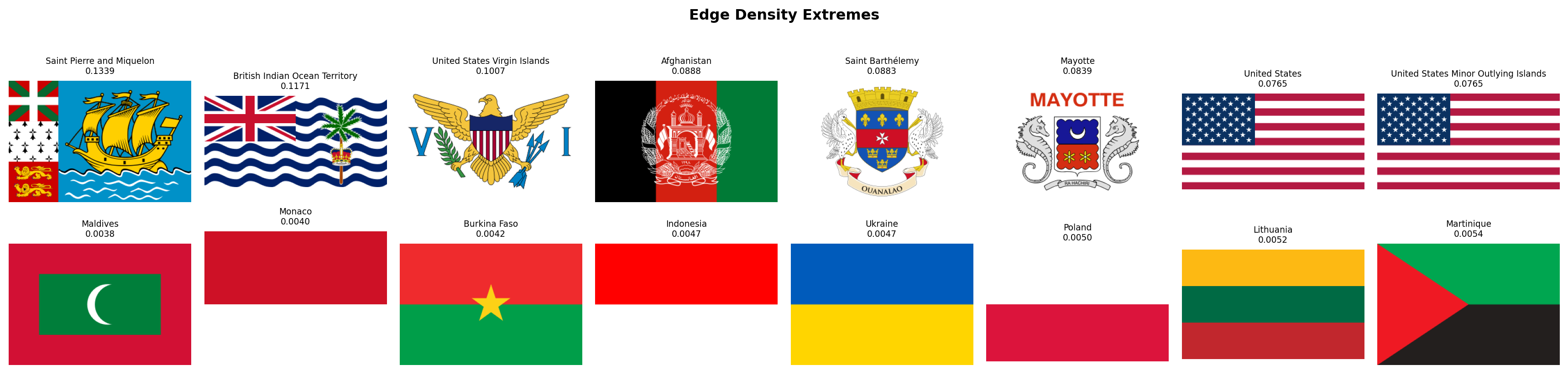

```{python}

#| label: edge-extremes

#| code-summary: "Flags with the highest and lowest edge density"

#| fig-cap: "Top row: the 8 flags with the highest edge density. These are the most geometrically detailed designs in the world's flag corpus: coats of arms, text, animals, intricate heraldic devices. Bottom row: the 8 flags with the lowest edge density. These are the cleanest, most minimal designs -- often bicolors or single-field flags with very few boundaries."

fig, axes = plt.subplots(2, 8, figsize=(18, 4.5))

for row_idx, (subset, title) in enumerate([

(df_visual.nlargest(8, "edge_density"), "Most Edges"),

(df_visual.nsmallest(8, "edge_density"), "Fewest Edges"),

]):

for col_idx, (_, flag_row) in enumerate(subset.iterrows()):

ax = axes[row_idx, col_idx]

img = rasterize_flag(flag_dir / f"{flag_row['code']}.svg", width=320)

ax.set_facecolor("#f0f0f0")

ax.imshow(img, aspect="equal")

ax.set_title(f"{flag_row['name']}\n{flag_row['edge_density']:.4f}", fontsize=7)

ax.axis("off")

axes[row_idx, 0].set_ylabel(title, fontsize=9, rotation=0, labelpad=65, va="center")

plt.suptitle("Edge Density Extremes", fontsize=12, fontweight="bold", y=1.01)

plt.tight_layout()

plt.show()

```

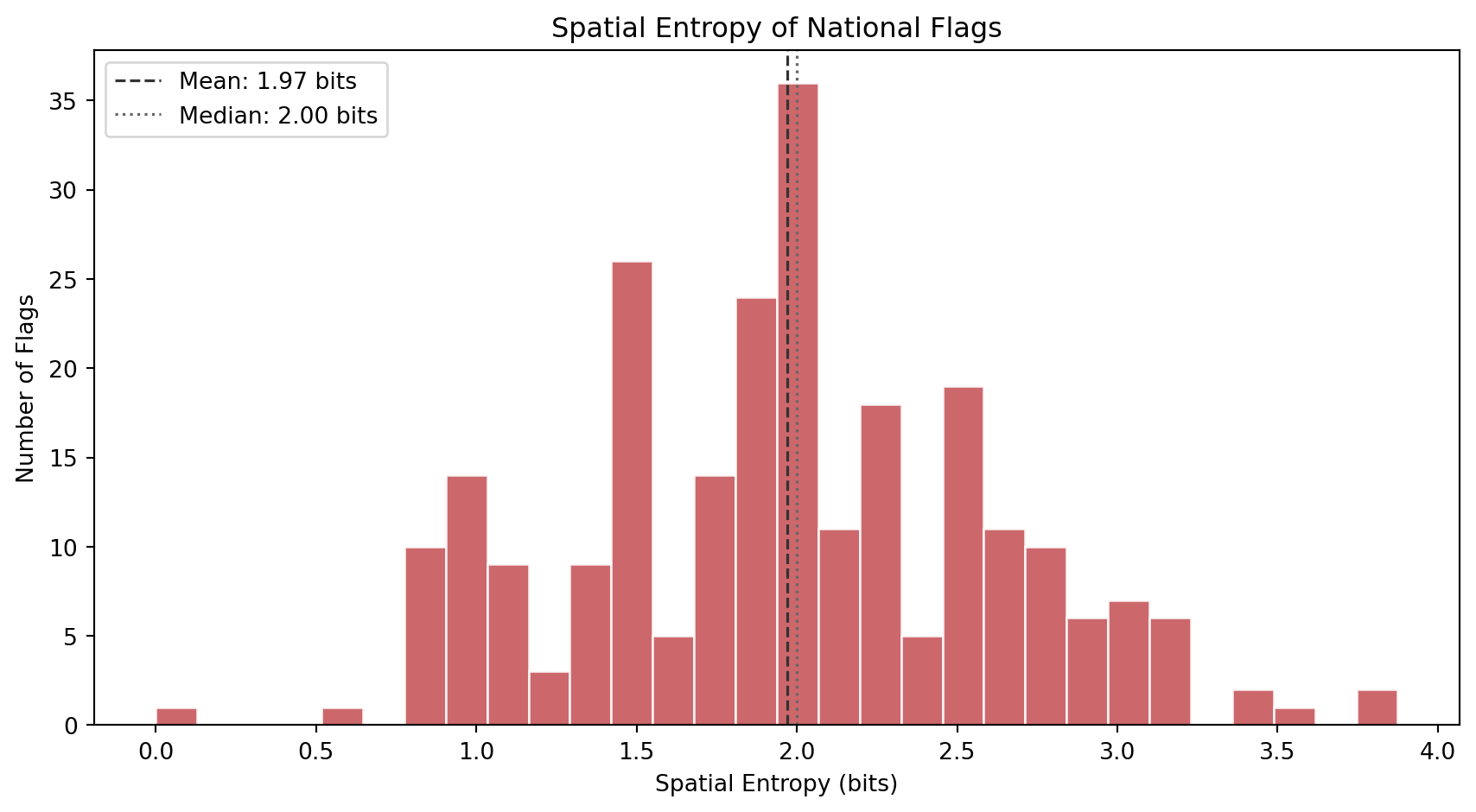

### Spatial Entropy

Where does the complexity *live* inside the flag? Spatial entropy answers this by dividing each flag into a 4x4 grid of cells, computing the mean color of each cell, and measuring how diverse those 16 cell colors are. A flag where all 16 cells have the same color (solid field) scores near zero. A flag where every cell is a different color (like a busy patchwork) scores high.

This metric distinguishes two flags that might have identical visual entropy but very different spatial structures. The USA has stars and stripes distributed across the entire flag (high spatial entropy). Laos has a central circle on a solid background (low spatial entropy). Both might have similar grayscale complexity, but their spatial distribution of detail is very different.

```{python}

#| label: spatial-histogram

#| code-summary: "Distribution of spatial entropy across flags"

#| fig-cap: "Distribution of spatial entropy (entropy of the 4x4 grid color distribution). Low values indicate uniform or single-element designs where most cells share the same color. High values indicate patterned or multi-region designs where the 16 grid cells show diverse colors."

fig, ax = plt.subplots(figsize=(9, 5))

ax.hist(df_visual["spatial_entropy"], bins=30, color="#C44E52",

edgecolor="white", alpha=0.85)

ax.axvline(df_visual["spatial_entropy"].mean(), color="#333",

linestyle="--", linewidth=1.2,

label=f"Mean: {df_visual['spatial_entropy'].mean():.2f} bits")

ax.axvline(df_visual["spatial_entropy"].median(), color="#666",

linestyle=":", linewidth=1.2,

label=f"Median: {df_visual['spatial_entropy'].median():.2f} bits")

ax.set_xlabel("Spatial Entropy (bits)")

ax.set_ylabel("Number of Flags")

ax.set_title("Spatial Entropy of National Flags")

ax.legend(loc="upper left")

plt.tight_layout()

plt.show()

```

```{python}

#| label: spatial-extremes

#| code-summary: "Flags with the highest and lowest spatial entropy"

#| fig-cap: "Top row: the 8 flags with the highest spatial entropy. These designs distribute complexity across the entire flag surface. Bottom row: the 8 flags with the lowest spatial entropy. These are the most spatially uniform designs, where nearly all grid cells share the same dominant color."

fig, axes = plt.subplots(2, 8, figsize=(18, 4.5))

for row_idx, (subset, title) in enumerate([

(df_visual.nlargest(8, "spatial_entropy"), "Most Distributed"),

(df_visual.nsmallest(8, "spatial_entropy"), "Most Uniform"),

]):

for col_idx, (_, flag_row) in enumerate(subset.iterrows()):

ax = axes[row_idx, col_idx]

img = rasterize_flag(flag_dir / f"{flag_row['code']}.svg", width=320)

ax.set_facecolor("#f0f0f0")

ax.imshow(img, aspect="equal")

ax.set_title(f"{flag_row['name']}\n{flag_row['spatial_entropy']:.2f} bits", fontsize=7)

ax.axis("off")

axes[row_idx, 0].set_ylabel(title, fontsize=9, rotation=0, labelpad=65, va="center")

plt.suptitle("Spatial Entropy Extremes", fontsize=12, fontweight="bold", y=1.01)

plt.tight_layout()

plt.show()

```

### Complexity Landscape

Let's combine all three metrics into a single view. The scatter plot below maps each flag in the space of edge density vs visual entropy, with spatial entropy encoded as marker color. This reveals how the three complementary dimensions of complexity relate to each other.

```{python}

#| label: complexity-landscape

#| code-summary: "Interactive scatter: edge density vs visual entropy, colored by spatial entropy"

#| fig-cap: "Each dot is a flag. X-axis: visual entropy (grayscale information content). Y-axis: edge density (geometric detail). Color: spatial entropy (how distributed the complexity is). Flags in the upper-right are both information-rich and edge-dense -- the most visually complex designs in the world."

fig = px.scatter(

df_visual, x="visual_entropy", y="edge_density",

color="spatial_entropy",

color_continuous_scale="magma",

hover_name="name",

hover_data={"visual_entropy": ":.2f", "edge_density": ":.4f",

"spatial_entropy": ":.2f"},

labels={"visual_entropy": "Visual Entropy (bits)",

"edge_density": "Edge Density",

"spatial_entropy": "Spatial Entropy (bits)"},

title="The Complexity Landscape of National Flags",

opacity=0.75, width=800, height=600,

)

fig.show()

```

### Palette Complexity Meets Visual Complexity

How does Family 2's `palette_complexity` (number of distinct color clusters) relate to Family 3's visual complexity? Flags with detailed coats of arms should score high on both: they have many colors *and* many edges. But clean geometric designs can have high color variety without high edge density (think of the South African flag: 6 colors, very few edges). This cross-family scatter tests whether color complexity and geometric complexity are redundant or complementary.

```{python}

#| label: palette-vs-edge

#| code-summary: "Interactive cross-family scatter: palette complexity vs edge density"

#| fig-cap: "Each dot is a flag. X-axis: palette complexity (Family 2). Y-axis: edge density (Family 3). Color: visual entropy (Family 3). The positive correlation confirms that flags with more colors also tend to have more edges, but the scatter is wide -- many flags with 3-4 colors span the full range of edge density, showing that the two metrics capture genuinely different design dimensions."

df_cross_visual = df_complexity[["code", "name", "palette_complexity"]].merge(

df_visual[["code", "visual_entropy", "edge_density", "spatial_entropy"]], on="code"

)

corr = df_cross_visual["palette_complexity"].corr(df_cross_visual["edge_density"])

fig = px.scatter(

df_cross_visual, x="palette_complexity", y="edge_density",

color="visual_entropy",

color_continuous_scale="viridis",

hover_name="name",

hover_data={"palette_complexity": True, "edge_density": ":.4f",

"visual_entropy": ":.2f"},

labels={"palette_complexity": "Palette Complexity (Family 2)",

"edge_density": "Edge Density (Family 3)",

"visual_entropy": "Visual Entropy (bits)"},

title=f"Color Complexity vs Geometric Complexity (Pearson r = {corr:.3f})",

opacity=0.75, width=800, height=600,

)

fig.update_layout(xaxis=dict(dtick=1))

fig.show()

```

### Discussion

Several findings emerge from Family 3.